Google’s latest model family, Gemini, aims to redefine the AI hierarchy, challenging OpenAI’s GPT-4 and GPT-3.5 with native multimodal capabilities. It’s not just a new contender; Google presents it as a bold step up, one that may even outperform human experts in some areas.

But is Gemini more than just buzz?

The announcement sets the stage for an exciting phase of AI development, and Google claims that Gemini Ultra represents a significant leap forward.

However, with OpenAI’s rumored Project Q* (Q-Star) on the horizon, the true comparison and its implications remain to be seen. Let’s dive in.

1 What is Google Gemini?

Google’s Gemini models represent a significant advance in multimodal AI. The family comprises three models, Gemini Ultra, Pro, and Nano, designed to handle complex tasks across image, audio, video, and text understanding.

Here’s an overview of the key points in Google’s Gemini technical report:

- Gemini Models:
  - Multimodal Integration: Trained jointly across text, image, audio, and video, Gemini models exhibit strong generalist capabilities alongside specialized performance in each domain.
  - Size Variants: Gemini Ultra for highly complex tasks, Pro for balancing performance and scalability, and Nano for on-device applications.
  - Native Multimodality: The models combine capabilities across modalities seamlessly, excelling at tasks that require cross-modal integration.

- Technical Architecture and Training:
  - Transformer-Based: Built on enhanced Transformer decoders, designed for stable training at scale and optimized inference on Google’s Tensor Processing Units (TPUs).
  - Infrastructure: Trained on TPUv5e and TPUv4, with network infrastructure supporting both model parallelism and data parallelism.
  - Dataset: A multimodal, multilingual dataset of web documents, books, code, images, audio, and video, with quality filters and safety filtering applied to maintain data integrity.
  - Effective Context Utilization: Supports a 32k-token context length and uses that long context effectively for retrieval and understanding tasks.

- Performance and Benchmarks:
  - State-of-the-Art Achievements: Gemini Ultra advanced the state of the art on 30 of the 32 reported benchmarks and is the first model to achieve human-expert performance on MMLU.
  - Diverse Capability Assessment: Evaluated on more than 50 benchmarks spanning factuality, long-context understanding, math/science, reasoning, and multilingual tasks.
  - Multilingual Excellence: Shows strong machine translation performance, even in low-resource languages, outperforming other leading models such as GPT-4 and PaLM 2.
  - Nano Models’ Proficiency: Despite their small size, the Gemini Nano models perform strongly on summarization, reading comprehension, reasoning, STEM, coding, multimodal, and multilingual tasks.

- User-Centric Evaluations and Applications:
  - Human Preference Validation: In human preference evaluations, instruction-tuned Gemini Pro models outperform other models on creative writing, instruction following, and safety, pointing to a more user-friendly and safer AI experience.
  - Advanced Reasoning Systems: A specialized version of Gemini Pro underpins AlphaCode 2, a system that excels at solving competitive programming problems, highlighting Gemini’s role in complex problem solving.

- Multimodal Understanding and Generation:
  - Varied Capability Analysis: Evaluated on high-level object recognition, fine-grained transcription, chart understanding, and multimodal reasoning, without relying on external OCR tools.
  - Cross-Modal and Multilingual Operations: Shows proficiency in image understanding and generation tasks across multiple languages.

The Gemini era is here. Thrilled to launch Gemini 1.0, our most capable & general AI model. Built to be natively multimodal, it can understand many types of info. Efficient & flexible, it comes in 3 sizes each best-in-class & optimized for different uses https://t.co/VUu1277bC2

— Demis Hassabis (@demishassabis) December 6, 2023

2 Is Bard Using Gemini Pro?

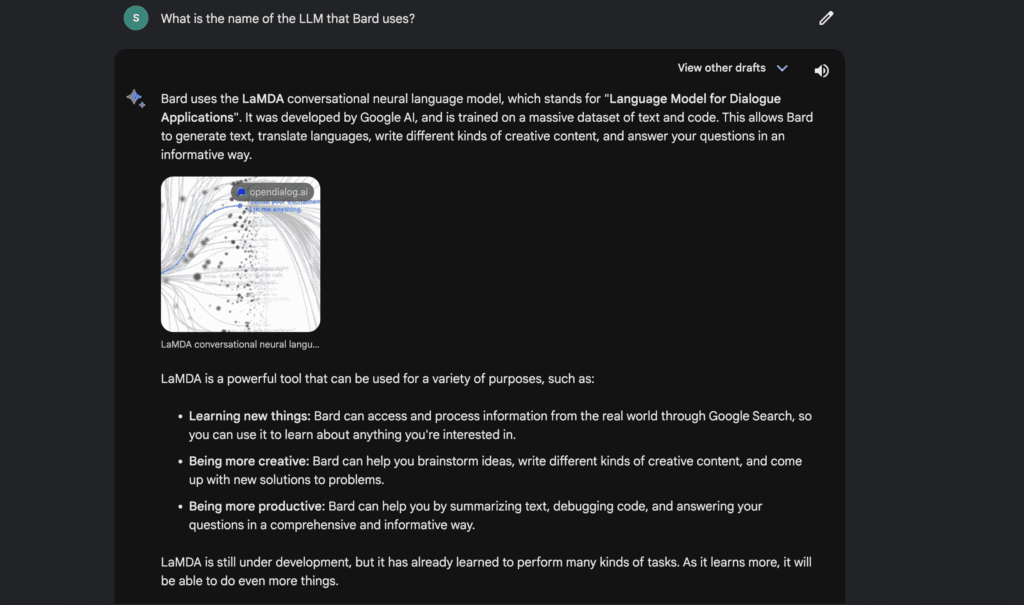

I’ve got the latest scoop on Google’s Bard, and you’re going to want to hear this: it’s about what’s under the hood of this slick AI. So I did what any curious cat would do: I asked Bard itself. Not once, but twice, because consistency is key, right?

First up, Bard told me it’s powered by LaMDA, Google’s Language Model for Dialogue Applications. LaMDA’s the cool kid on the block, designed to chat and assist like no one else.

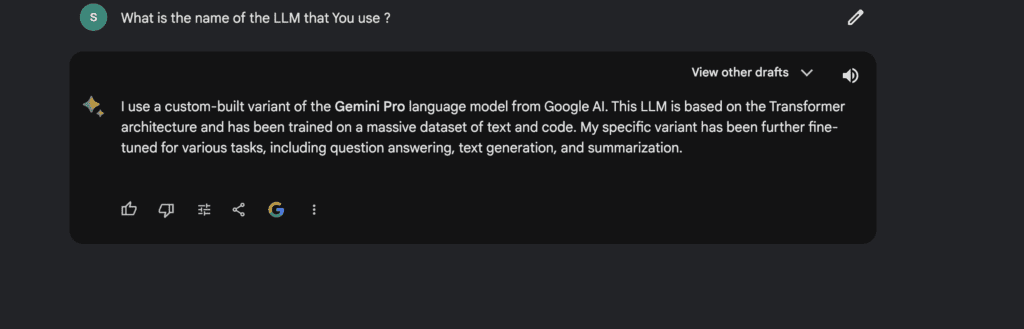

But when I tweaked my question slightly, Bard switched gears and talked about a custom-built variant of the Gemini Pro language model. Talk about a plot twist!

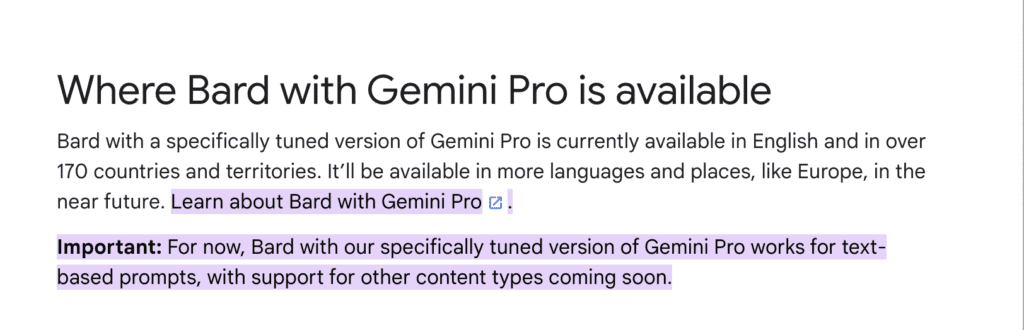

Now, this is where it gets even more interesting. Google’s own support page backs up the Gemini Pro story, saying Bard with Gemini Pro is available in English in a boatload of countries. Google plans to add more languages, spreading those chatty vibes far and wide.

So, why the mixed messages, Bard? Is it LaMDA? Gemini Pro? A bit of both? It’s like Bard has a bit of an identity crisis, or maybe it’s just flexing the range of its AI muscles.

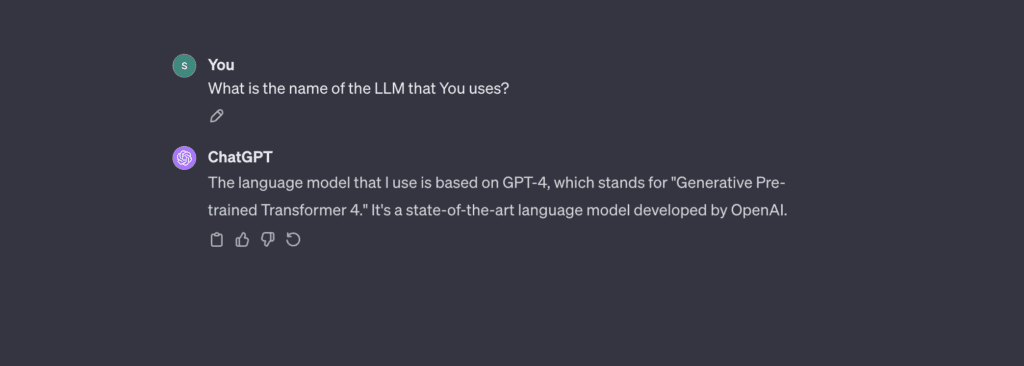

To get another perspective, I turned to ChatGPT, the brainchild of OpenAI. I asked both GPT-3.5 and GPT-4 the same thing, and guess what?

They were clear as crystal about their architecture.

This left us with more questions than answers. Is it a case of the left hand not knowing what the right hand is doing, or a deliberate move to keep us guessing? Or is it just the nature of the AI beast? One thing’s for sure: Google got us talking, and maybe that’s the point.

3 How to Access Gemini in Bard

To access Gemini in Bard, you’ll need to follow these steps:

- Make sure you have a Google account. If you don’t have one, you can create one for free.

- Visit the Bard website. You can access Bard through your web browser at https://bard.google.com/

- Log in with your Google account.

- Start typing your query or request. Bard will respond using Gemini Pro, the mid-sized model among the three Gemini variants.

Important things to note:

- Currently, Bard with Gemini Pro is only available in English.

- Support for more languages and regions is coming soon.

- Currently, Bard with Gemini Pro only supports text-based prompts. Support for other content types will be added in the future.

4 Google Gemini Ultra vs. GPT-4

- General:
  - MMLU: Gemini Ultra surpasses human-expert performance and outperforms GPT-4 on this holistic exam benchmark covering 57 subjects, scoring 90.0% with chain-of-thought prompting over 32 samples (CoT@32) versus GPT-4’s reported 86.4% with 5-shot prompting.
- Reasoning:
  - Big-Bench Hard: Gemini Ultra and GPT-4 perform comparably on tasks requiring multi-step reasoning, with Gemini Ultra slightly ahead (83.6% vs. 83.1%).
  - DROP: Gemini Ultra performs competently on this reading comprehension benchmark, with an F1 score of 82.4 versus GPT-4’s 80.9.
  - HellaSwag: Gemini Ultra achieves 87.8% on commonsense reasoning, well below GPT-4’s 95.3%, although the prompting setups may differ and so may not be directly comparable (10-shot+ for Gemini vs. 10-shot for GPT-4).
- Math:
  - GSM8K: On grade-school math word problems, Gemini Ultra edges out GPT-4, reaching 94.4% accuracy versus 92.0%.
  - MATH: On harder, competition-level math problems, the two are nearly tied, with Gemini Ultra slightly ahead (53.2% vs. 52.9%).
- Code:
  - HumanEval: Gemini Ultra clearly outperforms GPT-4 in Python code generation, scoring 74.4% (0-shot, instruction-tuned) versus GPT-4’s 67.0% (0-shot).
  - Natural2Code: On this newer held-out Python benchmark, Gemini Ultra holds a narrow lead, 74.9% versus GPT-4’s 73.9%.
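To put the head-to-head numbers above in perspective, here is a small Python sketch that computes the percentage-point gap for the four benchmarks where this section quotes both models’ scores (MMLU, HellaSwag, HumanEval, Natural2Code). It is plain arithmetic on the quoted figures; the names `SCORES` and `gap_points` are illustrative, and because prompting setups differ on some rows, the gaps are indicative rather than a like-for-like ranking.

```python
# Scores (percent) as quoted in this section: (Gemini Ultra, GPT-4).
# Prompting setups differ on some rows, so treat the gaps as indicative only.
SCORES = {
    "MMLU": (90.0, 86.4),
    "HellaSwag": (87.8, 95.3),
    "HumanEval": (74.4, 67.0),
    "Natural2Code": (74.9, 73.9),
}


def gap_points(gemini: float, gpt4: float) -> float:
    """Percentage-point gap; positive means Gemini Ultra scored higher."""
    # Round to one decimal to hide floating-point noise (e.g. 3.5999...).
    return round(gemini - gpt4, 1)


for name, (gemini, gpt4) in SCORES.items():
    print(f"{name}: {gap_points(gemini, gpt4):+.1f} pts")

# Gaps: MMLU +3.6, HellaSwag -7.5, HumanEval +7.4, Natural2Code +1.0
```

The HellaSwag row is the only one where GPT-4 leads, and Natural2Code shows how thin some of Gemini Ultra’s leads are.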

5 Comparison

| Feature | Gemini Ultra | GPT-4 |
|---|---|---|
| Multimodality | Designed multimodal from the ground up | Added to a text-first model |
| Benchmark performance | Exceeds GPT-4 and human experts on MMLU | Lower than Gemini Ultra on MMLU |
| MMLU prompting technique | Chain of thought with 32 samples (CoT@32) | 5-shot |
| Context window | 32k tokens | 128k tokens (GPT-4 Turbo; effective ~64k) |
| Video input | Supported | Not supported |
| Developer ecosystem | Not yet available | Well established |
| Market availability | Expected in the future | Available now |
| Position in the AI arms race | Leading in announcements | Leading in usable products |

6 My Perspective on AI Benchmarking

- Performance: Although Gemini Ultra is a multimodal model, it also excels at text-based tasks, showing a remarkable capacity for complex reasoning and achieving state-of-the-art results on most benchmarks.

- Methodologies: Google’s benchmark table includes annotations such as CoT@32* and 0-shot (IT)*, indicating the specific methods used for Gemini Ultra: chain of thought with 32 samples, and 0-shot prompting of an instruction-tuned model. These methods may partly account for its strong performance.

Note that the comparison with GPT-4 may not be entirely apples-to-apples, because the prompting methods and evaluation settings differ.

- Advancements: The results suggest that Google’s investment in Gemini Ultra’s architecture, training, and optimization has paid off, allowing it to match or surpass human-expert performance on some complex tasks and representing a substantial leap in LLM capabilities. However, the Ultra version of Gemini, the one Google claims can beat GPT-4, won’t be ready until sometime in 2024.
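To make the CoT@32 annotation more concrete: Google’s technical report describes an "uncertainty-routed" chain-of-thought approach. The model samples 32 chains of thought; if their final answers reach a consensus above a threshold (chosen on a validation split), that majority answer is used, otherwise the system falls back to a greedy answer produced without chain of thought. The Python sketch below covers only that routing step, assuming the sampled answers have already been extracted as strings. The function name and the 0.6 threshold are hypothetical, and no model calls are shown.

```python
from collections import Counter
from typing import Sequence


def uncertainty_routed_answer(
    cot_answers: Sequence[str],
    greedy_answer: str,
    threshold: float = 0.6,  # hypothetical value; the report tunes it on validation data
) -> str:
    """Route between a majority-vote CoT answer and a greedy fallback.

    cot_answers: final answers extracted from k sampled chains of thought (k=32 for CoT@32).
    greedy_answer: answer from a single greedy decode without chain of thought.
    """
    if not cot_answers:
        return greedy_answer
    # Most frequent answer among the sampled chains and its vote count.
    answer, votes = Counter(cot_answers).most_common(1)[0]
    consensus = votes / len(cot_answers)
    # Strong agreement among samples: trust the majority vote.
    if consensus >= threshold:
        return answer
    # Weak agreement: fall back to the greedy, no-CoT answer.
    return greedy_answer


# 24 of 32 samples agree (75% consensus), so the majority answer wins.
print(uncertainty_routed_answer(["B"] * 24 + ["C"] * 8, greedy_answer="C"))  # B
# Only 12 of 32 agree (37.5%), so the greedy answer is used instead.
print(uncertainty_routed_answer(["A"] * 12 + ["B"] * 10 + ["C"] * 10, greedy_answer="C"))  # C
```

The routing is why a CoT@32 score is not directly comparable to a single 5-shot run: the model gets many attempts per question plus a fallback.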

7 Google Gemini vs. OpenAI GPT Pricing

- Gemini’s Role in Google Products:
  - Designed to integrate with Google services such as Search and Chrome.
  - Enhances functionality while using data for targeted advertising.
- User Accessibility:
  - Currently free through select channels.
  - Accessible via Bard and, as Gemini Nano, on the Pixel 8 Pro.
  - Potentially part of future premium services.
- OpenAI’s GPT Pricing Model:
  - Free baseline service (GPT-3.5) for registered users.
  - ChatGPT Plus, a $20 monthly subscription, which includes:
    - Access to GPT-4.
    - Options to create custom GPTs (may have additional costs).
- API Access:
  - OpenAI provides a GPT API with usage-based, per-token pricing for developers and businesses.
  - Google has not released a Gemini API yet, but an announcement is expected.

8 Was Google’s Gemini Demo Completely Fabricated?

In advanced technology, and in AI particularly, demonstrations are crucial for showcasing capabilities and potential. The Google Gemini demo has ignited significant debate in the AI community about its authenticity, raising the question: was it completely fabricated?

Analyzing AI Community Perspectives and Precedents

- Historical Skepticism: The AI community has drawn parallels to Google’s previous AI demonstrations, like Duplex, which, despite initial excitement, didn’t completely live up to its showcased potential. This history has led to a certain level of skepticism regarding the authenticity of subsequent demos, including Gemini.

- Mixed Experiences: Diverse experiences with Google’s past AI products contribute to this skepticism. While some in the AI community have had positive interactions with products like Duplex, others felt that the reality did not match the demonstrations.

Dissecting the Demo

- Critical Observations: The AI community scrutinizes demos like Gemini’s for editing, selective presentation, and exaggeration. Observers point out that parts of the Gemini demo may have been enhanced or selectively presented to make the AI seem more advanced than it currently is; Google’s own description of the video states that latency was reduced and Gemini’s outputs were shortened for brevity.

- Absence of Conclusive Proof: Importantly, there’s no concrete evidence proving the demo was entirely fabricated. The discussions in the AI community are largely based on assumptions and interpretations of the demo’s presentation, rather than definitive proof of fabrication.

Industry Practices and Marketing Strategies

- Comparative Analysis: In the tech industry, it is not uncommon to show AI capabilities in the most favorable light, sometimes stretching beyond what the product can currently do. This practice is not unique to Google; other tech giants have faced similar scrutiny.

- Legal and Ethical Boundaries: Companies often walk a fine line between effective marketing and accurate representation. While they push boundaries to showcase potential, they typically stay within legal and ethical standards.

9 Conclusion

As we look ahead at what Gemini Ultra might bring, let’s not forget how quickly AI is changing. GPT-5 may not be far off, and Gemini Ultra will also need to fit into the workflows developers have already built around OpenAI’s tools.

Google has achieved a lot with Gemini, but what really counts is how it performs in the real world once everyone starts using it.

The AI race is moving fast, and 2024 looks set to be a year full of surprises. Gemini Ultra seems promising right now, but we’ll have to wait and see whether it really changes the game as more capable models arrive.

Read more: Google Gemini Ultra 1.0 Complete Review
