Guess what? Sam Altman just revealed something awesome at the DevDay keynote ,it’s the open-source Whisper v3 from OpenAI. This isn’t just a step up from the already impressive Whisper v2; it’s like leaping into the future of speech recognition.

Think of large-v3 as your familiar speech-to-text tool, but supercharged and fluent in many languages. Now, that’s something, right?

But here’s the best part – you don’t need some high-end rig to use it. If you’re worried your setup might not keep up, we’ve got you covered with Replicate.

It’s your way to experience all the cool features of large-v3, no matter what tech you’ve got at home.

So, ready to see what Whisper large-v3 is all about? Let’s get into it and find out why it’s the talk of the town!

1 What is Whisper-v3?

Whisper-v3, introduced by OpenAI, represents a breakthrough in speech recognition technology. This advanced model, known as ‘large-v3,’ is built on the same architecture as its predecessor, Whisper v2, but with notable enhancements. Whisper-v3 operates with 128 Mel frequency bins, compared to the 80 used in earlier versions, and includes a new language token for Cantonese. It excels in understanding and transcribing a wide range of languages, making it a versatile tool for diverse applications in speech-to-text conversion.

2 How Does Whisper-v3 Enhance Speech Recognition?

Features and Training of Whisper-v3

- Advanced Architecture: Whisper-v3 maintains the same fundamental architecture as previous large models, offering a robust foundation for speech recognition.

- Increased Mel Frequency Bins: The model uses 128 Mel frequency bins instead of the 80 used in earlier versions, enhancing its audio processing capabilities.

- New Language Token: Includes a new language token for Cantonese, expanding its linguistic reach.

- Extensive Training Data: Trained on 1 million hours of weakly labeled audio and 4 million hours of pseudolabeled audio using Whisper large-v2, ensuring wide-ranging language and dialect coverage.

- Improved Error Rate Reduction: Shows a 10% to 20% reduction in error rates compared to Whisper large-v2, marking significant progress in accuracy.

- Multilingual and Multitask Training: The model is capable of both speech recognition and speech translation, trained on multilingual data for versatile applications.

- Predictive Capabilities: For speech recognition, it predicts transcriptions in the same language as the audio. For speech translation, it transcribes to a different language.

3 Whisper v2 vs Whisper v3: What Are the Key Differences?

Comparing the Performance and Capabilities

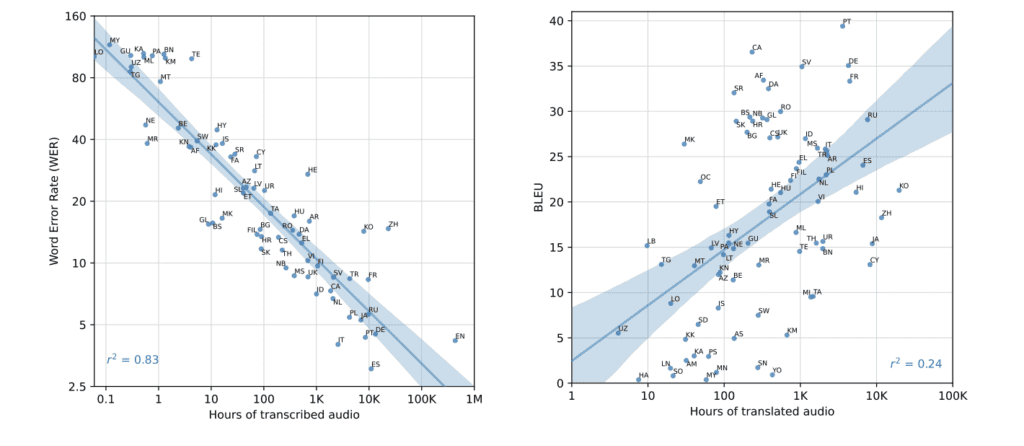

Based on the image provided, here are the key differences between Whisper-v2 and Whisper-v3 models as depicted in the performance comparison charts for Common Voice 15 and FLEURS datasets:

- Performance Metrics: The image shows a side-by-side comparison using bar graphs to represent Word Error Rate (WER) or Character Error Rate (CER) percentages for a wide range of languages.

- Error Rate Reduction: Across both datasets, Whisper-v3 generally has lower WER or CER percentages, indicating better performance and fewer errors in speech recognition across most languages.

- Language Coverage: Both versions of the model cover a multitude of languages, but Whisper-v3 shows improved error rates, reflecting advancements in the model’s ability to process and understand diverse languages and dialects.

- Top Performers: In the Common Voice 15 dataset, languages like Dutch, Spanish, and Korean show notably lower error rates with Whisper-v3 compared to Whisper-v2. Similarly, in the FLEURS dataset, Spanish, Italian, and Korean are among the languages with the most significant improvements.

- Range of Improvement: While the improvements vary by language, the trend is a clear reduction in error rates from v2 to v3. For some languages, the improvement is quite dramatic, while for others, it is more moderate.

- Consistency Across Datasets: The improvement trend is consistent across both the Common Voice 15 and FLEURS datasets, reinforcing the overall enhancements made in Whisper-v3.

4 What Are the Technical Requirements for Whisper-v3?

Addressing VRAM Requirements and Hardware Challenges

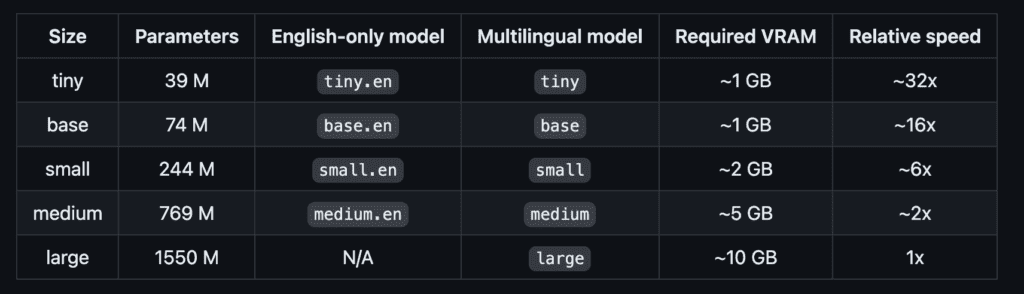

Alright, let’s break down the VRAM requirements for Whisper-v3 and discuss how users with limited hardware can still get in on the action.

So, Whisper-v3 is like the latest supercar in the world of speech recognition it’s powerful and speedy. But just like a supercar needs the right kind of fuel to run, Whisper-v3 needs VRAM, and plenty of it. The larger the model, the more VRAM it gulps down. Here’s what you’re looking at for each size:

- Tiny Model: Just a light snack of about 1 GB of VRAM.

- Base Model: Also pretty lean, needing around 1 GB.

- Small Model: Starting to get hungry with a need for around 2 GB.

- Medium Model: A solid 5 GB to keep it going.

- Large Model: The big eater, requiring about 10 GB of VRAM.

With each model size from tiny to large, the VRAM requirements ramp up, maxing out at a hefty 10 GB for the largest model. This can be a real roadblock for those running on more vintage setups, where even a robust i7 Intel CPU might stumble, coughing up FP16 warnings that essentially say, “I need more power!

But don’t sweat it ,there’s a workaround. Enter Replicate. Think of Replicate as the bridge that lets you cross over to the land of large models without having to upgrade your hardware. It’s a platform that lets you tap into Whisper-v3’s power through the cloud. So even if your system’s VRAM is more on the modest side, you can still use Replicate to transcribe audio like a pro.

5 How Can Replicate Help in Using Whisper-v3 Without High VRAM?

To use Whisper-v3 without high VRAM, Replicate provides a user-friendly cloud platform that allows you to run models like Whisper-v3 without worrying about the VRAM limitations of your local hardware. Here’s how Replicate can be the solution you’re looking for:

- Cloud-Based Processing: Replicate runs the Whisper-v3 model on their cloud servers, which means you don’t use your own computer’s resources.

- Accessibility: It makes Whisper-v3 accessible to anyone with an internet connection, without the need for powerful GPUs.

- User-Friendly: Replicate offers a straightforward API to interact with Whisper-v3, simplifying the process for users of all skill levels.

- Cost-Effective: For users who cannot afford the hardware upgrades, Replicate provides a more budget-friendly option.

- Scalability: Whether you need to process one audio file or thousands, Replicate can scale to meet your demands without any additional setup on your part.

6 Step-by-Step Guide: How to Use Whisper-v3 with Replicate

Ready to harness the power of Whisper-v3 for your speech-to-text needs? I’ve crafted a user-friendly guide to help you navigate the process using Replicate, even if you’re not equipped with high VRAM. Plus, I’ve put together a script that you can find in our GitHub repository that automates the process, making it a breeze to transcribe audio files from a specified folder and interactively decide if you want to continue with the next file. Let’s dive into the steps and explore the code together:

Installing the Python Client: Start by installing the Replicate client in your Python environment.

pip install replicate

API Token Authentication: Securely store your API token in a .env file. Use the dotenv library to load this into your script.

import os

from dotenv import load_dotenv

load_dotenv()

Setting Up the Replicate Client: Initialize the Replicate client using the API token from your environment.

client = replicate.Client()

Specifying the Audio Folder Path: Define the path to your folder containing audio files.

audio_folder_path = './audio'

Transcribing an Audio File: Create a function to run the Whisper-v3 model on your audio file using Replicate.

# Function to transcribe an audio file

def transcribe_audio(file_path):

# Running the whisper-large-v3 model on the audio file

output = client.run(

"nateraw/whisper-large-v3:e13f98aa561f28e01abc92a01a4d48d792bea2d8d1a4f9e858098d794f4fe63f",

input={"filepath": open(file_path, "rb")}

)

return output

Interactive User Decision: Implement a function to ask the user if they want to continue to the next file.

def ask_continue():

answer = input("Do you want to process the next audio file? (yes/no): ")

return answer.strip().lower() == "yes"

Processing Audio Files in the Folder: Loop through each file in the specified folder, transcribing them and asking whether to proceed with the next.

def process_audio_files(folder_path):

for filename in os.listdir(folder_path):

# Code to transcribe and ask for continuation

Executing the Main Function: Run your main function to process the audio files.

if __name__ == "__main__":

process_audio_files(audio_folder_path)Explore the Full Code on GitHub

Configuring Translation and Timestamps

Want to take your transcriptions to the next level? Our code is flexible, allowing you to add translation and timestamps easily. If you’re looking to translate your transcriptions to English or include timestamps for each spoken chunk, here’s how you can tweak the code:

-

Translation: To translate the text to English, simply set the

translateparameter toTrue. This feature automatically converts the speech from the source language to English. -

Timestamps: If you need timestamps for each transcribed segment, enable the

return_timestampsparameter by setting it toTrue.

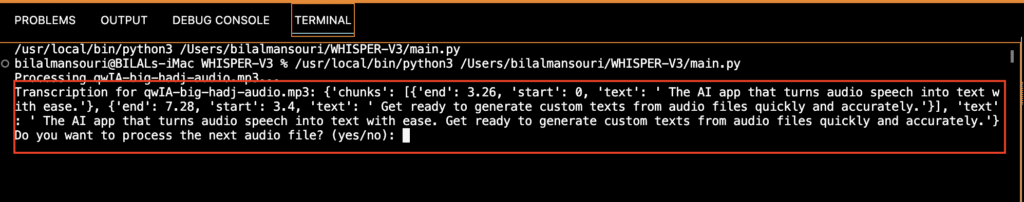

Interpreting the Output

Audio Example for Testing: For our test, we used this Example

This audio clip features a clear, spoken narrative about an AI application’s capabilities in speech-to-text conversion.

Transcription Showcase:

The transcription produced by Whisper-v3 for this audio is as follows:

- “The AI app that turns audio speech into text with ease.Get ready to generate custom texts from audio files quickly and accurately.”

Accuracy Analysis: The transcription accurately captures the content of the audio file. It demonstrates Whisper-v3’s effectiveness in converting spoken words into written text, maintaining both the meaning and the tone of the original speech. The transcription is coherent and free of significant errors, showcasing the model’s precision.

7 Whisper Large-v3 User Feedback: Key Performance Issues

Based on the large-v3 release discussion from OpenAI’s Whisper project, several users have shared their experiences regarding the accuracy and performance of the Whisper v3 model. Key points highlighted by users include:

-

Repetition and Hallucination: Users reported issues with the model repeating sentences or creating hallucinations, particularly in Japanese and Korean. This issue was noted to be more prevalent in v3 compared to v2.

-

Misalignment with Longer Audio: Some users experienced timing issues with longer audio files, where the timestamps became increasingly misaligned as the file progressed.

-

Issues with Punctuation and Capitalization: There were observations that Whisper v3 sometimes fails to accurately transcribe punctuation and capitalization, especially when compared to the v2 model.

-

Varied Performance Across Languages: Users mentioned that Whisper’s performance varies widely depending on the language, with some languages showing better accuracy than others.

-

Model Struggles with Silence/Intermittent Speech: The model still faces challenges in accurately transcribing sections with silence or intermittent speech.

These user experiences indicate certain limitations and areas for improvement in Whisper v3, especially concerning its consistency and accuracy across different languages and audio conditions.

Read More : Elevenlabs Dubbing & Video Translator : Complete Guide

8 Conclusion:

In summary, OpenAI’s Whisper large-v3 emerges as a groundbreaking advancement in speech recognition technology, offering enhanced capabilities over its predecessor, Whisper v2.

With its extensive language coverage, improved error rates, and advanced features like increased Mel frequency bins and Cantonese language support, large-v3 stands out as a highly versatile and efficient tool.

Additionally, its compatibility with platforms like Replicate makes it accessible even to users with limited hardware, democratizing advanced speech-to-text technology.

Despite these advancements, user experiences point out areas needing refinement, such as challenges with repetition, hallucinations in certain languages, timing misalignments in longer audio, and inconsistencies in punctuation and capitalization.

These insights highlight that while Whisper large-v3 is a remarkable step forward, it is still evolving, with room for improvement in accuracy and consistency across varied languages and audio conditions.

Discussion about this post