Text embedding models, including those that integrate with LangChain, have become a cornerstone of natural language processing (NLP) and artificial intelligence (AI).

These innovative models serve as a critical bridge, connecting the nuanced realm of human language with the numerical world comprehended by machines.

In this article, we list and explore the popular text embedding models that integrate with LangChain.

We’ll delve into their unique features and how they enhance the processing and understanding of language within the realm of AI and NLP.

What Are Text Embeddings in LangChain?

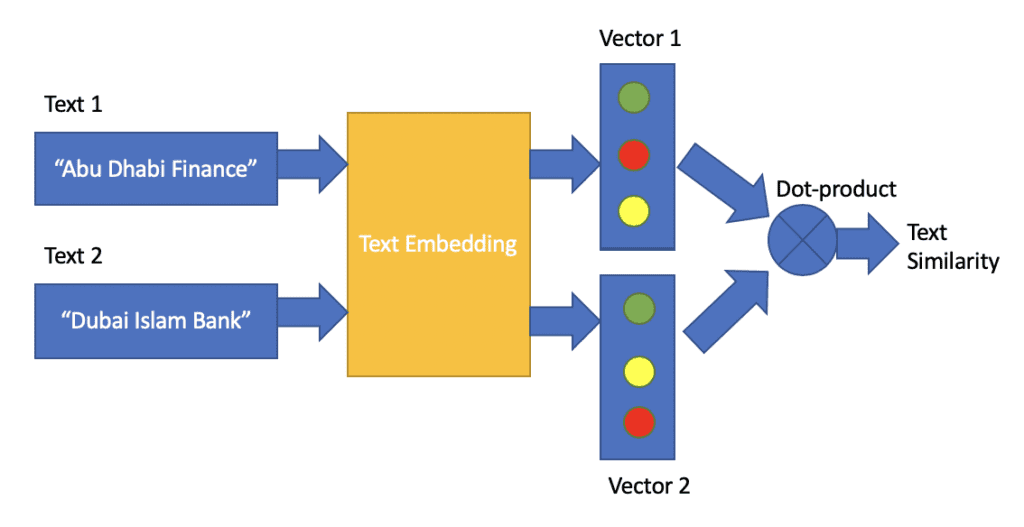

Text embeddings are numerical representations of text (words, phrases, or sentences) used in Natural Language Processing (NLP) to help machines understand human language.

These representations are vectors of real numbers that capture the semantic meaning of the text.

How Do They Work?

Text embeddings are generated by models trained on large text corpora, allowing them to understand contextual relationships between words.

By converting text into dense vectors, these models help machines process and analyze natural language effectively.

They are implemented using APIs from AI platforms, simplifying the creation of embeddings for various applications like semantic search and text classification.
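
To make the idea of "text in, vector out" concrete, here is a minimal sketch. It does not use a trained model: `toy_embed` is a hypothetical helper that hashes words into a fixed number of buckets, so it captures none of the semantics a real embedding model learns. It only illustrates the shape of the result, one fixed-length list of floats per text.

```python
import hashlib
import math
from typing import List


def toy_embed(text: str, dim: int = 16) -> List[float]:
    """Map text to a fixed-length unit vector by hashing its words.

    Hypothetical stand-in for a real embedding model, used only to show
    what an embedding looks like as data.
    """
    vector = [0.0] * dim
    for word in text.lower().split():
        # Hash each word to a stable bucket index in [0, dim).
        digest = hashlib.sha256(word.encode("utf-8")).digest()
        bucket = int.from_bytes(digest[:4], "big") % dim
        vector[bucket] += 1.0
    # Scale to unit length so texts of different sizes are comparable.
    norm = math.sqrt(sum(v * v for v in vector))
    return [v / norm for v in vector] if norm else vector


embedding = toy_embed("LangChain turns text into vectors")
print(len(embedding))  # 16
```

A real model replaces the hashing step with learned weights, which is what lets related texts end up close together in the vector space.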

Applications of Text Embeddings

Text embeddings are not just a theoretical construct but have practical applications in various industries. For example, Google Search uses embeddings to match text queries with relevant search results.

Representing text as embeddings allows for robust, semantically aware retrieval of information. Beyond search, embeddings are applied in text classification, information retrieval, and measuring semantic similarity, among other use cases.
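
Semantic search over embeddings usually comes down to comparing vectors. The sketch below ranks documents by cosine similarity to a query. The three-dimensional vectors are made up for illustration, and `rank_documents` is a hypothetical helper rather than part of any library.

```python
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors: dot(a, b) / (|a| * |b|)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # convention chosen here for zero vectors
    return dot / (norm_a * norm_b)


def rank_documents(
    query_vec: Sequence[float], doc_vecs: Sequence[Sequence[float]]
) -> List[Tuple[int, float]]:
    """Return (document index, score) pairs, most similar first."""
    scores = [(i, cosine_similarity(query_vec, d)) for i, d in enumerate(doc_vecs)]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


# Made-up vectors standing in for real model output.
query = [1.0, 0.0, 1.0]
documents = [
    [0.9, 0.1, 0.8],     # points in nearly the same direction as the query
    [0.0, 1.0, 0.0],     # orthogonal to the query
    [-1.0, 0.0, -1.0],   # points in the opposite direction
]
ranking = rank_documents(query, documents)
print([index for index, _ in ranking])  # [0, 1, 2]
```

In practice the vectors would come from an embedding model, and a vector database would replace the linear scan once the collection grows.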

List of Text Embedding Models Integrated with LangChain

1 Aleph Alpha

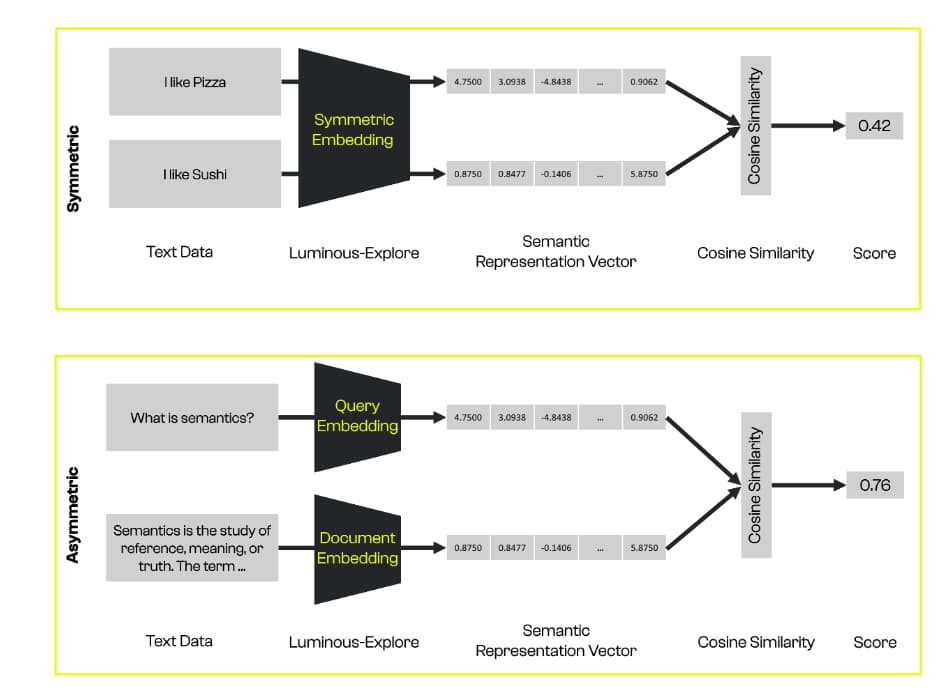

The Aleph Alpha text embedding model offers two distinct approaches for semantic embeddings, depending on the structure of the texts being analyzed:

- Asymmetric Embeddings: This method is ideal for texts with dissimilar structures, such as a document and a query. It helps in understanding and comparing different forms of text.

- Symmetric Embeddings: When dealing with texts that have similar structures, symmetric embeddings are recommended. This approach is useful for analyzing and comparing texts that are structurally alike.

In both cases, Aleph Alpha provides an efficient way to encode and understand the semantic content of texts, making it a versatile tool for various text analysis applications.

2 AwaDB

AwaDB is designed as an AI-native database tailored for the search and storage of embedding vectors, which are crucial in Large Language Model (LLM) applications. This makes AwaDB a key tool in efficiently handling the complexities of embedding vectors in various AI tasks.

Key Features of AwaDB:

- Use of AwaEmbeddings: AwaDB utilizes AwaEmbeddings, a component that enables the embedding of textual data. This is crucial for understanding and processing natural language by converting text into a form that AI models can easily interpret.

- Customizable Embedding Model: With AwaDB, users have the flexibility to set their preferred embedding model using the Embedding.set_model() function. This feature allows the tailoring of the embedding process to specific needs or preferences. The default model provided is all-mpnet-base-v2, which is a versatile choice for general purposes.

- Embedding Queries and Documents: AwaDB provides the functionality to embed both queries and documents. This means it can handle a variety of text inputs, from simple queries to more complex documents, making it adaptable for different use cases in natural language processing.

3 Azure OpenAI

Azure OpenAI is a service that offers OpenAI’s language and embedding models on Azure’s cloud infrastructure. Here’s what you need to know about Azure OpenAI:

- Integration with Azure: Azure OpenAI requires setting up specific environment variables to use Azure endpoints. This integration ensures that you can leverage the robust cloud infrastructure provided by Azure for your text embedding needs.

- Embedding Capabilities: The Azure OpenAI Embedding class allows for embedding queries and documents, transforming text into a format that is more understandable and usable by machine learning models. This is particularly useful for a range of applications like text analysis, natural language understanding, and more.

- Customization and Control: Users can specify the deployment name and API version they want to use, allowing for a degree of customization and control over the embedding process. This is particularly useful for adapting the tool to specific requirements or preferences in different scenarios.

- Legacy Support: For those using older versions of the OpenAI API, Azure OpenAI maintains legacy support, enabling users to continue using the service without having to update their existing systems immediately.
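
Reading credentials from environment variables, as described above, can be sketched with the standard library alone. `load_settings` is a hypothetical helper, and the variable names, the endpoint URL, and the deployment name are assumptions used for illustration; the integration's documentation has the exact names it expects.

```python
import os
from typing import Dict, Iterable, Mapping


def load_settings(
    required: Iterable[str], env: Mapping[str, str] = os.environ
) -> Dict[str, str]:
    """Collect required settings from the environment, failing fast if any are missing."""
    names = list(required)
    missing = [name for name in names if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return {name: env[name] for name in names}


# Placeholder values for illustration only; never hard-code real credentials.
fake_env = {
    "AZURE_OPENAI_ENDPOINT": "https://example-resource.openai.azure.com/",
    "AZURE_OPENAI_API_KEY": "placeholder-key",
}
settings = load_settings(["AZURE_OPENAI_ENDPOINT", "AZURE_OPENAI_API_KEY"], env=fake_env)

config = {
    **settings,
    "deployment": "my-embedding-deployment",  # hypothetical deployment name
    "api_version": "<api-version>",           # left as a placeholder on purpose
}
print(sorted(config))
```

Failing at startup when a variable is missing tends to be easier to debug than an authentication error on the first embedding request.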

4 Baidu Qianfan

Baidu Qianfan is a comprehensive and versatile platform offered by Baidu AI Cloud. It’s specifically designed as a one-stop solution for enterprise developers focusing on large model development and service operation. Here’s a breakdown of its key features and functionalities:

Key Features of Baidu Qianfan:

- API Initialization: To use LangChain with Baidu Qianfan, you need to initialize certain parameters. This can be done by setting the Access Key (AK) and Secret Key (SK) as environment variables or directly in the code.

- Embedding Functionality: With LangChain, you can use Qianfan for embedding. This includes embedding documents and queries asynchronously, which helps when processing many texts efficiently.

- Customization and Flexibility: Baidu Qianfan offers the flexibility to deploy your own model or use the default models. If you wish to use a custom model, you can set up a specific endpoint in the initialization, allowing for a tailored embedding experience.

- Integration with LangChain Embeddings: The integration with LangChain’s QianfanEmbeddingsEndpoint makes it straightforward to implement and use Baidu Qianfan’s embedding capabilities within the LangChain framework, enhancing the overall efficiency and effectiveness of language model applications.
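
The asynchronous embedding mentioned above follows a general pattern that can be sketched without any network access. `ToyAsyncEmbedder` is a hypothetical stand-in for a remote endpoint; its method names mirror the async naming used on LangChain embedding classes, but the vectors it returns are simple text statistics, not real embeddings.

```python
import asyncio
from typing import List


class ToyAsyncEmbedder:
    """Hypothetical stand-in for a remote embedding endpoint."""

    async def aembed_query(self, text: str) -> List[float]:
        await asyncio.sleep(0)  # placeholder for a network round trip
        # Fake "embedding": character count and word count.
        return [float(len(text)), float(text.count(" ") + 1)]

    async def aembed_documents(self, texts: List[str]) -> List[List[float]]:
        # Start all requests concurrently; gather returns results in input order.
        return list(await asyncio.gather(*(self.aembed_query(t) for t in texts)))


async def main() -> List[List[float]]:
    embedder = ToyAsyncEmbedder()
    return await embedder.aembed_documents(["hello world", "hi"])


vectors = asyncio.run(main())
print(vectors)  # [[11.0, 2.0], [2.0, 1.0]]
```

With a real endpoint, the benefit comes from overlapping the waiting time of many requests instead of issuing them one after another.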

5 Bedrock

Amazon Bedrock is a cutting-edge, fully managed service that simplifies the integration of powerful foundation models (FMs) into your applications. Here’s a quick rundown of its key features:

- Selection of Foundation Models: Access to a diverse range of high-performance FMs from leading AI companies like AI21 Labs, Anthropic, Cohere, Meta, Stability AI, and Amazon, all through a single API.

- Ease of Experimentation: Effortlessly experiment with and assess the best FMs for your specific needs.

- Customization and Enhancement: Privately customize these models with your data through advanced techniques like fine-tuning and Retrieval Augmented Generation (RAG).

- Task Execution with Enterprise Integration: Build AI agents capable of executing tasks using your enterprise systems and data sources.

- Serverless Infrastructure: With Amazon Bedrock being serverless, you’re relieved from managing any infrastructure, allowing for a more streamlined integration process.

- Secure and Familiar AWS Integration: Securely integrate and deploy AI capabilities within your applications using AWS services you’re already comfortable with.

- Python Integration: Easily embed the Bedrock capabilities in your Python code, allowing you to embed queries and documents asynchronously.

6 BGE on Hugging Face

BGE on Hugging Face provides a top-tier open-source embedding model created by the Beijing Academy of Artificial Intelligence (BAAI). Here’s a concise overview focusing on its embedding capabilities:

Key Features of BGE on Hugging Face:

- Renowned Source: BGE models are developed by BAAI, a respected private non-profit organization in AI research and development.

- Integration with Hugging Face: These models are available on Hugging Face, a popular platform for open-source AI models, making them easily accessible.

- LangChain Compatibility: The HuggingFaceBgeEmbeddings class in LangChain allows for seamless integration and use of BGE models for embedding purposes.

- Customizable Model Use: Users can specify the model name, choose the device for running the model (e.g., CPU), and set encoding parameters, including normalizing embeddings for optimal results.

- Efficient Text Embedding: BGE on Hugging Face is designed to efficiently process and embed textual queries, making it suitable for a variety of natural language processing applications.
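
Normalizing embeddings, mentioned in the list above, means scaling each vector to unit length. The sketch below uses hypothetical helpers and tiny made-up vectors to show why that is convenient: once both vectors are normalized, their plain dot product is already their cosine similarity.

```python
import math
from typing import List, Sequence


def l2_normalize(vector: Sequence[float]) -> List[float]:
    """Scale a vector to unit (L2) length; a zero vector is returned unchanged."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0.0:
        return list(vector)
    return [x / norm for x in vector]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    """Plain dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


a = l2_normalize([3.0, 4.0])
b = l2_normalize([4.0, 3.0])
print(a)          # [0.6, 0.8]
print(dot(a, b))  # cosine similarity of the original vectors, about 0.96
```

Storing normalized vectors lets a similarity search use the cheaper dot product at query time.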

7 Bookend AI

Bookend AI, integrated with LangChain, offers a specialized class for embeddings, providing a streamlined and effective solution for embedding text data. Here’s an overview of its key features and functionalities:

- Bookend AI Embeddings Class: A dedicated class within LangChain specifically for handling embeddings using Bookend AI. This class is designed to be intuitive and easy to use for embedding tasks.

- Simple Initialization: To use the Bookend AI embeddings, you start by initializing the BookendEmbeddings class with your specific domain, API token, and embeddings model ID. This process is straightforward, ensuring a quick setup.

- Embedding Individual Texts: Bookend AI allows for embedding single text inputs, such as sentences or short paragraphs, through the embed_query function. This feature is particularly useful for analyzing or comparing individual pieces of text.

- Batch Embedding of Documents: For handling multiple texts or documents simultaneously, Bookend AI provides the embed_documents function. This capability is essential for processing larger datasets or comparing multiple documents efficiently.
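
The embed_query and embed_documents pair described above recurs across most of the integrations in this list. The sketch below shows the shape of that two-method interface with a hypothetical `ToyEmbeddings` class. Its vectors are derived from character codes and carry no meaning; a real class would call a model or a remote API instead.

```python
from typing import List


class ToyEmbeddings:
    """Hypothetical class mirroring the embed_query / embed_documents shape."""

    def __init__(self, dim: int = 4) -> None:
        self.dim = dim

    def embed_query(self, text: str) -> List[float]:
        """Embed one text and return a single vector of length dim."""
        vector = [0.0] * self.dim
        for position, char in enumerate(text):
            # Spread character codes across the vector; purely illustrative.
            vector[position % self.dim] += ord(char) / 1000.0
        return vector

    def embed_documents(self, texts: List[str]) -> List[List[float]]:
        """Embed many texts and return one vector per text, in input order."""
        return [self.embed_query(text) for text in texts]


embedder = ToyEmbeddings(dim=4)
query_vector = embedder.embed_query("What is an embedding?")
doc_vectors = embedder.embed_documents(["First document.", "Second document."])
print(len(query_vector), len(doc_vectors), len(doc_vectors[0]))  # 4 2 4
```

Real integrations may treat the two methods differently, for example by batching document requests or by encoding queries and documents in distinct ways.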

8 Clarifai

Clarifai’s integration with LangChain for text embedding functions provides a robust and flexible AI solution. Here are the key features and functionalities focusing on this aspect:

- Text Embedding Models: Clarifai offers specialized models for text embedding, making it ideal for tasks involving natural language processing.

- Integration with LangChain: The platform is integrated with LangChain, a framework for building applications on top of language models. This integration makes Clarifai’s text embeddings available inside LangChain workflows.

- Prompt Template Creation: Users can create prompt templates for use in an LLM (large language model) chain. This feature allows for customized question and answer formats, tailored to specific needs.

- Model Initialization: The platform allows for easy initialization of text embedding models. Users can either use a specific model ID or a direct model URL, providing flexibility in accessing different model versions or types.

- Embedding Single Text Lines: Clarifai supports embedding single lines of text through the embed_query function, ideal for processing individual pieces of text or queries.

- Embedding Multiple Documents: For more extensive data sets, Clarifai’s embed_documents function can embed a list of texts or documents, facilitating batch processing of language data.

9 Cloudflare Workers AI

Cloudflare Workers AI, in conjunction with LangChain, provides a powerful platform for running machine learning models, especially for text embedding tasks. Here’s a breakdown of its features and functionalities:

- Integration with Cloudflare Network: Cloudflare Workers AI leverages the extensive Cloudflare network to run machine learning models. This integration allows for high performance and scalability.

- REST API Access: The platform enables you to interact with machine learning models directly from your code via a REST API, offering a flexible and programmable approach.

- Comprehensive Model Availability: Cloudflare documents all available text embedding models, providing a wide range of options for different requirements and applications.

- LangChain Community Integration: The integration with LangChain’s CloudflareWorkersAIEmbeddings class allows for seamless embedding operations. This class is specifically tailored for Cloudflare’s AI capabilities.

- Embedding Single Strings: The platform supports embedding single strings through the embed_query function, useful for analyzing individual text inputs.

10 Cohere

Cohere’s integration with LangChain for text embedding offers a focused and efficient approach to processing and analyzing text data. Here’s a summary of its key features and capabilities:

- Cohere Embedding Class: Cohere provides a specialized embedding class within the LangChain community, designed for efficient text embeddings.

- Model Selection: Users can select from various Cohere models for embedding, for example embed-english-light-v3.0, and choose the model that best fits the task.

- Embedding Single Texts: Cohere allows for the embedding of individual text strings through the embed_query function. This is particularly useful for analyzing or processing single sentences or paragraphs.

- Detailed Embedding Outputs: The output from the embedding process is a detailed array of values, offering a nuanced representation of the embedded text. This level of detail is essential for tasks requiring a deep understanding of text features.

11 DashScope

DashScope, with its integration in the LangChain community, offers a specialized embedding class designed for processing and analyzing text data. Here’s a summary of its key features and capabilities:

- DashScope Embedding Class: DashScope provides a specific class for text embeddings within the LangChain community, focused on delivering efficient and effective text embedding capabilities.

- Model Selection: Users can select from DashScope’s models for text embedding.

- API Key Integration: To utilize the DashScope embeddings, users need to input their DashScope API key. This integration ensures secure and authorized access to DashScope’s services.

- Embedding Individual Texts: The embed_query function allows for embedding a single piece of text. This feature is particularly useful for detailed analysis of individual sentences or paragraphs, making it ideal for applications requiring precise text analysis.

12 DeepInfra

DeepInfra is a serverless platform that offers inference as a service, focusing on Large Language Models (LLMs) and embedding models. It allows users to implement and use text embeddings in their projects, and its LangChain integration makes DeepInfra-hosted embedding models available from within LangChain.

13 Eden AI

Eden AI stands out in the AI industry by offering a unified platform that aggregates the best AI providers, thereby unlocking a wide spectrum of AI capabilities. Its user-friendly, all-encompassing platform simplifies AI deployment, making it exceptionally fast and straightforward. The integration of Eden AI with LangChain for embedding models exemplifies this ease of use and versatility, showcasing how users can leverage powerful AI features through a singular API. This synergy enhances the potential of embedding models in various applications.

14 Elasticsearch

Elasticsearch facilitates the generation of embeddings using hosted embedding models. This integration, particularly with the ElasticsearchEmbeddings class from the LangChain community, offers a robust and efficient way to create embeddings for text data.

Top Features of Elasticsearch

- Flexible Instantiation Methods: The ElasticsearchEmbeddings class can be instantiated using either Elastic Cloud credentials or a connection to any Elasticsearch cluster, offering flexibility for different user environments and preferences.

- Ease of Setup: The process to set up and use the ElasticsearchEmbeddings class is straightforward, involving simple installation of required packages and basic configuration steps. This ease of setup is ideal for both beginners and experienced users.

- Support for Multiple Document Embeddings: Users can generate embeddings for multiple documents simultaneously, a feature that greatly enhances efficiency and scalability in processing large volumes of text data.
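
When many documents are embedded at once, they are commonly sent in batches rather than in a single huge request. The sketch below shows that pattern in isolation. `batched`, `embed_in_batches`, and `fake_embed_batch` are hypothetical helpers; the fake model simply returns each text's length, and the batch size is an arbitrary choice.

```python
from typing import Callable, Iterator, List, Sequence

Vector = List[float]


def batched(items: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of items, each at most batch_size long."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield list(items[start:start + batch_size])


def embed_in_batches(
    texts: Sequence[str],
    embed_batch: Callable[[List[str]], List[Vector]],
    batch_size: int = 2,
) -> List[Vector]:
    """Embed texts batch by batch, keeping results in the original order."""
    vectors: List[Vector] = []
    for batch in batched(texts, batch_size):
        vectors.extend(embed_batch(batch))
    return vectors


def fake_embed_batch(batch: List[str]) -> List[Vector]:
    """Stand-in for a model call: one single-number 'vector' per text."""
    return [[float(len(text))] for text in batch]


texts = ["a", "bb", "ccc", "dddd", "eeeee"]
print([len(b) for b in batched(texts, 2)])        # [2, 2, 1]
print(embed_in_batches(texts, fake_embed_batch))  # [[1.0], [2.0], [3.0], [4.0], [5.0]]
```

A suitable batch size depends on the model and on request limits, so it is worth treating as a tunable parameter.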

15 Embaas

Embaas is a comprehensive NLP API service focused on embedding generation. It allows users to access a variety of pre-trained models for creating embeddings from text documents, ideal for applications in text analysis and natural language processing. Integrated with LangChain, Embaas provides an efficient solution for transforming text into meaningful, actionable data representations.

Key Features:

- Embedding Generation: Facilitates the creation of embeddings for individual and multiple documents.

- Variety of Pre-Trained Models: Offers a selection of pre-trained models to cater to different embedding requirements.

- Integration with LangChain: Enhances text embedding capabilities through seamless integration with LangChain.

- User-Friendly API Access: Simplifies access to embedding features through an easy-to-use API.

- Customizable Model Options: Allows users to select different models and custom instructions for tailored embedding generation.

16 FastEmbed by Qdrant

FastEmbed by Qdrant is a streamlined and efficient Python library designed for rapid embedding generation. It stands out for its lightweight structure and CPU-focused design, making it highly suitable for handling large datasets. The integration with LangChain further enhances its capability, allowing for effective use in various NLP and data analysis applications.

Key Features:

- Quantized Model Weights: Utilizes optimized model weights for faster processing and reduced memory usage, ideal for embedding generation.

- ONNX Runtime Support: Operates on ONNX Runtime, eliminating the dependency on PyTorch, which contributes to its lightweight and efficient nature.

- CPU-First Design: Engineered with a focus on CPU processing, ensuring optimal performance even without specialized hardware.

- Data-Parallelism: Capable of encoding large datasets in parallel, significantly boosting the speed and efficiency of embedding generation.

- Customizable Parameters: Offers several adjustable parameters, such as model name, maximum token length, cache directory, and thread usage, allowing users to tailor the embedding process to their specific needs.
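
To give a feel for why quantized weights reduce memory usage, here is a generic sketch of symmetric 8-bit quantization. It is not FastEmbed's actual scheme, which this article does not describe; the helper names and the sample weights are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] plus one shared scale factor."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

print(quantized)  # [50, -127, 0, 100]
# Each restored value differs from the original by at most half a step.
print(max(abs(w - r) for w, r in zip(weights, restored)) <= scale / 2 + 1e-12)  # True
```

Each weight then fits in one byte instead of four or eight, at the cost of a small rounding error bounded by half the scale.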

17 Google Generative AI Embeddings

Google Generative AI Embeddings service, accessible via the GoogleGenerativeAIEmbeddings class from the langchain-google-genai package, offers a sophisticated solution for generating text embeddings. This service leverages Google’s advanced AI models, enabling users to produce high-quality embeddings for various NLP tasks. The integration with LangChain enhances its functionality, providing an efficient and user-friendly approach to embedding generation.

Key Features:

- Easy Installation and Setup: The service can be easily set up with a simple pip installation command and requires a Google API key for access, ensuring a straightforward start for users.

- Flexible Embedding Options: Users can generate embeddings for individual queries as well as batch process multiple strings at once, increasing efficiency for larger datasets.

- Task-Specific Embedding Customization: The service supports different task types, including retrieval_query, retrieval_document, semantic_similarity, classification, and clustering. This feature allows users to optimize embeddings based on the specific nature of their task.

- High-Quality Embeddings: Leveraging Google’s advanced generative AI models, the service provides high-quality embeddings, suitable for a wide range of applications such as semantic analysis and document retrieval.

- Scalable and Efficient: With the ability to handle both individual and batch processing, the service is scalable and efficient, catering to both small-scale and large-scale embedding needs.

18 Google Vertex AI PaLM

Google Vertex AI PaLM is a service offered by Google Cloud, providing access to advanced embedding models. This API is distinct from the general Google PaLM integration and aligns with Google Cloud’s AI/ML Privacy Commitment. Vertex AI PaLM is designed for users who require high-quality embeddings and is particularly useful for those working within the Google Cloud environment.

Key Features:

- Access to Advanced Embedding Models: Vertex AI PaLM exposes sophisticated embedding models, allowing users to generate high-quality embeddings for a variety of text-based applications.

- Privacy and Data Security Commitment: In line with Google Cloud’s AI/ML Privacy Commitment, the service ensures that customer data is not used to train foundation models, providing assurance regarding data privacy and security.

- Flexible Authentication Options: The service supports different authentication methods, including environment-based credentials and service account JSON files, catering to various user setups and preferences.

- Simple Installation and Setup: Users can easily install the necessary Google Cloud AI platform package and get started with the Vertex AI PaLM API, ensuring a user-friendly experience.

19 GPT4All

GPT4All is an innovative, free-to-use, locally running chatbot that prioritizes user privacy. It operates independently of the internet and GPU, making it accessible for a wide range of users. GPT4All features popular models along with its unique offerings like GPT4All Falcon and Wizard. This tool is particularly notable for its integration with LangChain, enabling the generation of embeddings from text data.

Key Features:

- Privacy-Aware and Local Operation: GPT4All runs locally and doesn’t require an internet connection, offering a high degree of privacy and security for user data.

- No GPU Requirement: It functions efficiently without the need for a GPU, making it accessible for users with varying hardware capabilities.

- Diverse Range of Models: Includes popular models as well as its own offerings, such as GPT4All Falcon and Wizard, providing users with a variety of options for their embedding needs.

- Easy Installation of Python Bindings: The installation process is straightforward, involving a simple pip command, thus ensuring ease of setup and use.

- Embedding Generation for Individual and Multiple Texts: Users can generate embeddings for both individual queries and multiple documents, enhancing the tool’s versatility for different NLP tasks.

20 Gradient

Gradient is a versatile web API that enables the creation of embeddings, fine-tuning, and obtaining completions on Large Language Models (LLMs). It is particularly noted for its integration with LangChain, which enhances its capabilities in generating embeddings. Gradient offers a user-friendly interface and a straightforward API, making it a viable choice for various NLP tasks.

Key Features:

- Embedding Generation and LLM Fine-Tuning: Offers the ability to create embeddings and fine-tune LLMs, providing users with a range of options for text analysis and language model optimization.

- Simple Web API Interface: The web API is designed for ease of use, allowing users to quickly set up and start generating embeddings or working with LLMs.

- Environment-Variable API Key Setup: Users can set their API key and workspace ID as environment variables, ensuring secure and convenient access to Gradient’s features.

- Initial Credit Offering: Gradient provides $10 in free credits, enabling users to test and fine-tune different models without immediate cost.

21 Hugging Face

Hugging Face offers an advanced embedding class that is easily accessible and integrates seamlessly with LangChain. This service is known for its robust and extensive library of pre-trained models and tools for natural language processing (NLP). Hugging Face’s approach simplifies the process of generating high-quality embeddings for text data, making it a preferred choice for a variety of NLP tasks.

Key Features:

- Easy Integration with LangChain: Hugging Face’s embedding class integrates smoothly with LangChain, offering an enhanced experience for generating text embeddings.

- Simple Installation Process: The required packages, including langchain and sentence_transformers, can be installed via a straightforward pip command, facilitating an easy setup.

- Local and API-Based Embedding Generation: Users can generate embeddings either by installing models locally or by accessing models via the Hugging Face Inference API, providing flexibility in terms of resource usage and convenience.

- Access to a Wide Range of Pre-Trained Models: Hugging Face is renowned for its extensive library of pre-trained models, allowing users to choose the most suitable model for their specific embedding needs.

- Efficient Handling of Both Single and Multiple Text Inputs: The service supports embedding generation for individual queries as well as batches of documents, making it adaptable for various scales of NLP projects.

22 Infinity

Infinity is a versatile tool that offers the ability to create embeddings using an MIT-licensed Embedding Server. It is designed to work in conjunction with LangChain, providing a powerful means of generating embeddings. This tool is especially notable for its flexibility and ease of use, with a focus on integrating with the Infinity GitHub Project for embedding generation.

Key Features:

- MIT-Licensed Embedding Server: Infinity provides an open-source, MIT-licensed server for embedding generation, promoting accessibility and customization for users.

- Easy Installation and Setup: The installation process for Infinity is straightforward, with detailed guidance available on GitHub, ensuring a smooth setup experience.

- Compatibility with Popular Models: Infinity supports well-known models such as ‘sentence-transformers/all-MiniLM-L6-v2’, allowing users to leverage reliable and tested models for their embedding needs.

- Flexible Deployment Options: Users can start the Infinity server using a standard command or through Docker, offering flexibility in terms of deployment and environment setup.

- Effective Document Embedding and Similarity Computation: Infinity allows for embedding multiple documents and efficiently computes similarity scores, making it useful for a range of applications from semantic analysis to document retrieval.

23 Jina

Jina is a modern framework that facilitates the creation of embeddings using its unique Jina Embedding class. Integrated with LangChain, Jina provides a streamlined approach to generating high-quality text embeddings. This tool is particularly useful for developers and data scientists who require efficient and scalable solutions for text processing and embedding generation.

Key Features:

- User-Friendly API Key Setup: The setup process for Jina is straightforward, requiring only an API key for access, which simplifies the initialization and use of the embedding services.

- Support for Popular Models: Jina supports various embedding models, including “jina-embeddings-v2-base-en,” providing users with reliable and effective options for their embedding tasks.

- Efficient Text Embedding: The Jina Embedding class is designed for efficient processing of text, enabling both individual query embeddings and batch document embeddings.

- Simplicity and Ease of Use: The simplicity of the Jina framework, combined with its powerful embedding capabilities, makes it accessible and easy to use for a wide range of users, from beginners to advanced practitioners.

24 John Snow Labs

John Snow Labs offers a comprehensive NLP & LLM ecosystem, featuring state-of-the-art AI tools for a wide range of applications, including Healthcare, Legal, and Finance sectors. Their platform provides access to over 20,000 models and includes functionalities like Responsible AI and No-Code AI. The integration with LangChain for embedding generation makes John Snow Labs a robust choice for complex NLP tasks.

Key Features:

- Extensive Model Library: Access to over 20,000 models for various domains, allowing users to select the most suitable one for their specific requirements.

- Simple Setup Process: Easy installation with a pip command, and an option for enterprise users to install additional features.

- Integration with LangChain for Embeddings: The JohnSnowLabsEmbeddings class, combined with LangChain, facilitates efficient generation of text embeddings for diverse applications.

- Versatile Embedding Generation: Ability to generate embeddings for both individual texts and multiple documents, catering to a wide range of NLP tasks from document similarity to text classification.

- High-Quality Embeddings: The embeddings generated by John Snow Labs are numerical representations that accurately capture the content and context of the input texts, making them highly effective for advanced NLP applications.

25 Llama-cpp

Llama-cpp is a specialized tool designed to work within the LangChain framework for generating text embeddings. It offers a streamlined approach to embedding text data, making it suitable for various NLP applications.

Key Features:

- Easy Installation: The tool can be easily installed with a simple pip command, ensuring a quick and hassle-free setup process.

- Custom Model Path Configuration: Users can specify the path to their model, offering flexibility in terms of model selection and usage.

- Efficient Text Embedding: Capable of generating embeddings for both individual queries and multiple documents, Llama-cpp is adaptable to different scales and types of text embedding tasks.

26 LLMRails

LLMRails Embeddings is a versatile tool designed for generating text embeddings, offering an efficient and user-friendly solution for a wide range of natural language processing tasks. It requires an API key, which users can obtain by signing up on the LLMRails platform, ensuring secure and personalized access.

Key Features:

- Simple API Key Setup: Users can easily obtain an API key from the LLMRails platform, ensuring secure access to the embedding services.

- Multiple Model Options: LLMRails provides options like “embedding-english-v1” and “embedding-multi-v1,” catering to different language and embedding requirements.

- Flexibility in Text Input: Capable of generating embeddings for both individual queries and multiple documents, making it adaptable for various types of text processing tasks.

- Efficient and Accurate Embeddings: The embeddings produced are numerical representations that accurately capture the essence of the input text, making them suitable for advanced NLP applications.

27 LocalAI

LocalAI offers a unique embedding class that is particularly designed for local hosting and configuration. It provides a customizable and self-hosted solution for generating text embeddings, making it ideal for environments where data privacy and local processing are priorities.

Key Features:

- Self-Hosted Service: LocalAI requires users to host the LocalAI service locally, offering a high degree of data privacy and security.

- Customizable Embedding Models: Users have the flexibility to configure their own embedding models according to their specific requirements.

- Support for Various Model Generations: LocalAI supports both first-generation models and more advanced options, catering to a wide range of embedding needs.

- Proxy Support for Connectivity: LocalAI provides the option to configure proxy settings, allowing users in restricted network environments to access the service without hassle.

28 MiniMax

MiniMax is a service that offers text embedding capabilities, specifically designed to integrate with LangChain for enhanced text embedding interaction. It provides a straightforward approach for both individual queries and document embeddings, catering to various NLP applications.

Key Features:

- Simple Environment Variable Setup: Users can easily set up the service by configuring the necessary environment variables, such as group ID and API key, ensuring secure access.

- Versatile Text Embedding: MiniMax supports embedding for both single queries and multiple documents, making it versatile for different types of text analysis tasks.

- Cosine Similarity Computation: The query and document vectors returned by the service can be compared with cosine similarity, a useful metric for assessing how closely a document matches a query.

- Ease of Use: With its straightforward setup and integration, MiniMax provides a user-friendly experience for generating high-quality text embeddings.
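Cosine similarity, mentioned above, is the dot product of two vectors divided by the product of their lengths. The following is a minimal sketch using only the Python standard library. The two vectors are made-up stand-ins for a query embedding and a document embedding, not output from MiniMax.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Made-up vectors standing in for a query embedding and a document embedding.
query_vector = [0.1, 0.3, 0.5]
document_vector = [0.2, 0.6, 1.0]  # same direction as the query, twice the length
print(cosine_similarity(query_vector, document_vector))
```

Because the measure depends only on direction, the two vectors above score approximately 1.0 even though their lengths differ. Orthogonal vectors score 0.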

29 ModelScope

ModelScope stands out as a vast repository of models and datasets, offering a specialized Embedding class that integrates seamlessly with LangChain. This platform is tailored for users seeking access to a wide range of models.

Key Features:

- Flexible Model Selection: Users have the flexibility to choose different models, like “damo/nlp_corom_sentence-embedding_english-base”, allowing for tailored embedding solutions.

- Support for Various Text Inputs: ModelScope can generate embeddings for both individual queries and batches of documents, catering to a range of NLP tasks.

- User-Friendly Experience: With its straightforward implementation and integration, ModelScope offers an accessible and efficient approach to text embedding.

30 MosaicML

MosaicML offers a managed inference service, allowing users to utilize a range of open-source models or deploy their own for text embedding tasks. This service, particularly in conjunction with LangChain, provides a flexible and powerful solution for generating text embeddings.

Key Features:

- Managed Inference Service: MosaicML provides a managed platform for inference, simplifying the process of embedding generation for users.

- Variety of Model Options: Users have the option to choose from various open-source models or deploy custom models, offering versatility in embedding solutions.

- Customizable Embedding Instructions: Users can specify embedding instructions, allowing for tailored embedding processes based on the specific needs of their query or document.

- Cosine Similarity Calculation: The resulting query and document vectors can be compared with cosine similarity, an important metric for assessing the relationship between text inputs.
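Instruction-based embedding models take a short task description alongside each text, so the same sentence can be embedded differently as a query or as a document. The sketch below only shows how such (instruction, text) pairs might be assembled before they are sent to an endpoint. The helper names and instruction strings are assumptions for illustration, not MosaicML's API.

```python
from typing import List, Tuple

# Illustrative instruction strings; the defaults of any particular integration may differ.
QUERY_INSTRUCTION = "Represent the question for retrieving supporting documents: "
DOCUMENT_INSTRUCTION = "Represent the document for retrieval: "


def build_query_pair(text: str, instruction: str = QUERY_INSTRUCTION) -> Tuple[str, str]:
    """Pair a query with the instruction describing how it should be embedded."""
    return (instruction, text)


def build_document_pairs(
    texts: List[str], instruction: str = DOCUMENT_INSTRUCTION
) -> List[Tuple[str, str]]:
    """Pair every document with the same document-side instruction, preserving order."""
    return [(instruction, text) for text in texts]


query_pair = build_query_pair("What is a managed inference service?")
document_pairs = build_document_pairs(
    ["A managed service hosts the model for you.", "Embeddings are vectors."]
)
print(query_pair)
print(document_pairs)
```

Keeping the query and document instructions separate lets each side of a retrieval task be tuned without touching the other.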

31 NLP Cloud

NLP Cloud is an advanced artificial intelligence platform that provides access to cutting-edge AI engines, including the capability to train engines with custom data. It offers a specialized embeddings endpoint that supports a range of languages, making it ideal for multilingual text embedding tasks.

Key Features:

- Access to Advanced AI Engines: NLP Cloud enables users to leverage some of the most advanced AI engines available, enhancing the quality and accuracy of text embeddings.

- Support for Multilingual Embedding Model: The platform offers the “paraphrase-multilingual-mpnet-base-v2” model, which is well-suited for embeddings extraction in over 50 languages.

- Flexibility in Text Processing: NLP Cloud can handle embedding generation for both individual queries and multiple documents, catering to diverse NLP applications.

- User-Friendly Setup: With a simple pip install command and API key setup, NLP Cloud offers an accessible and straightforward experience for users.

32 NVIDIA AI Foundation Endpoints

NVIDIA AI Foundation Endpoints provide access to advanced AI models like Mixtral 8x7B and Llama 2, optimized for performance on the NVIDIA platform. These models, available through NVIDIA’s hosted API endpoints, are designed for efficient evaluation, customization, and deployment in any accelerated environment.

Key Features:

- Access to High-Performance Models: Offers a range of NVIDIA AI Foundation Models, optimized and hosted for peak performance on NVIDIA’s platform.

- Customizable and Accelerated Solutions: Users can quickly customize and deploy models on the NVIDIA DGX Cloud for accelerated performance.

- Enterprise-Grade Security and Support: Ensures enterprise-grade security, stability, and support when deploying customized models.

- Efficient Embedding Generation: Provides fast and effective embedding solutions for both queries and documents, suitable for retrieval-augmented generation tasks.
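In retrieval-augmented generation, the retrieval step typically embeds the query, scores it against stored document embeddings, and passes the best matches to the language model. The sketch below shows only that ranking step, using made-up three-dimensional vectors in place of real embeddings. The `top_k` helper is hypothetical and is not part of NVIDIA's or LangChain's API.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def top_k(
    query: Sequence[float], documents: Sequence[Sequence[float]], k: int = 2
) -> List[Tuple[int, float]]:
    """Return (document index, similarity) pairs for the k most similar documents."""
    scored = [(index, cosine(query, vector)) for index, vector in enumerate(documents)]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Made-up vectors; real embeddings have hundreds or thousands of dimensions.
query_vector = [1.0, 0.0, 0.0]
document_vectors = [
    [0.0, 1.0, 0.0],  # orthogonal to the query
    [0.9, 0.1, 0.0],  # nearly parallel to the query
    [0.5, 0.5, 0.0],  # 45 degrees from the query
]
ranked = top_k(query_vector, document_vectors, k=2)
print([index for index, _ in ranked])
```

A linear scan like this is fine for a handful of documents. Larger collections are usually served from a vector store that indexes the embeddings.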

33 Ollama

Ollama provides a platform for running large language models locally, offering a range of models such as Mistral, Llama 2, Code Llama, Mixtral, Zephyr, and WizardCoder. It’s designed for users who prefer local deployment of language models for embedding generation.

Key Features:

- Local Deployment of Large Language Models: Ollama allows users to run advanced language models locally, offering privacy and control over the embedding process.

- Variety of Models: Supports various models, including smaller ones like “llama2:7b”, giving users the flexibility to choose according to their specific requirements.

- Versatile Embedding Options: Capable of generating embeddings for both individual queries and multiple documents, making it versatile for different types of text analysis.

- Easy Accessibility to Models: Users can easily access different models through Ollama’s library, ensuring a wide range of options for embedding generation.

34 OpenClip

OpenClip, an open-source implementation of OpenAI’s CLIP, provides multi-modal embedding capabilities, enabling the embedding of both images and text. This tool is designed for users who need to generate embeddings for diverse data types using advanced AI models.

Key Features:

- Multi-Modal Embedding Capabilities: OpenClip can embed both images and text, making it a versatile tool for various applications.

- Support for Different Models: Offers the flexibility to choose between larger, more performant models and smaller, less performant ones, such as “ViT-g-14” and “ViT-B-32” respectively.

- Customizable Model Selection: Users can specify model names and checkpoints, allowing for tailored embedding processes based on specific requirements.

- Text-Image Similarity Checks: Because images and text are embedded in the same vector space, their features can be compared directly, which makes OpenClip suitable for tasks such as matching captions to images.

35 OpenAI

OpenAI Embeddings offer a robust solution for text embedding, integrating with LangChain to provide efficient and high-quality embeddings. This tool is designed for those seeking advanced embedding capabilities using OpenAI’s state-of-the-art models.

Key Features:

- Flexibility in Model Selection: Users can select from several models, including the second-generation “text-embedding-ada-002” as well as older first-generation models, providing flexibility for specific embedding needs.

- Efficient Text Embedding: Capable of generating embeddings for both individual queries and multiple documents, making it versatile for different types of text analysis tasks.

- Proxy Support for Connectivity: Includes the option to configure proxy settings, allowing users in restricted network environments to access the service conveniently.

- Consistent Embedding Results: Delivers high-quality and consistent embeddings, suitable for a wide range of NLP applications.

Conclusion

LangChain text embedding models offer a diverse and powerful toolkit for transforming human language into numerical representations that machines can process.

From local deployment options to advanced AI engines and multi-modal capabilities, these models cater to a wide range of needs in the NLP and AI fields.

Their integration with LangChain enhances their ease of use and flexibility, making them indispensable tools for professionals seeking efficient and accurate text analysis solutions.

As we continue to advance in AI and NLP, the role of these embedding models will undoubtedly grow, further bridging the gap between human language and machine understanding.
