Ever felt like coding could use a sprinkle of magic? Well, brace yourself, because there’s a new wizard in town, and it goes by the name of Code Llama.

In this article, we won’t just introduce you to this game-changing tool; we’ll also put the three distinct Code Llama models to the test, all without the hassle of local setups.

Dive in with us as we unravel the wonders of this coding revolution. Trust me, you won’t want to miss out on this transformative journey!

1 What Is Code Llama?

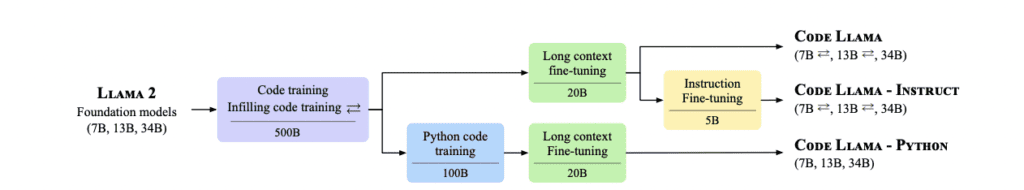

Code Llama is a groundbreaking release from Meta AI: a family of large language models specifically designed for code. It’s built on Llama 2 (the models start from Llama 2 weights and are further trained on code) and, at release, achieved state-of-the-art performance among open models.

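What sets Code Llama apart? Its infilling capability (completing code between a given prefix and suffix), its support for long input contexts (the models are trained on 16k-token sequences and, per Meta, show improvements on inputs of up to 100k tokens), and its zero-shot instruction following tailored for programming tasks.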

But that’s not all! Code Llama comes in several flavors to cover a wide range of applications. Whether you need a foundation model (Code Llama), a Python specialist (Code Llama - Python), or an instruction-following model (Code Llama - Instruct), Code Llama has you covered, with each variant available in 7B, 13B, and 34B parameter sizes.

Meta AI (@MetaAI) announced the release on August 24, 2023: “Today we’re releasing Code Llama, a large language model built on top of Llama 2, fine-tuned for coding & state-of-the-art for publicly available coding tools. Keeping with our open approach, Code Llama is publicly-available now for both research & commercial use.”

2 Evaluating Code Llama’s Performance

Benchmark Tests and Results:

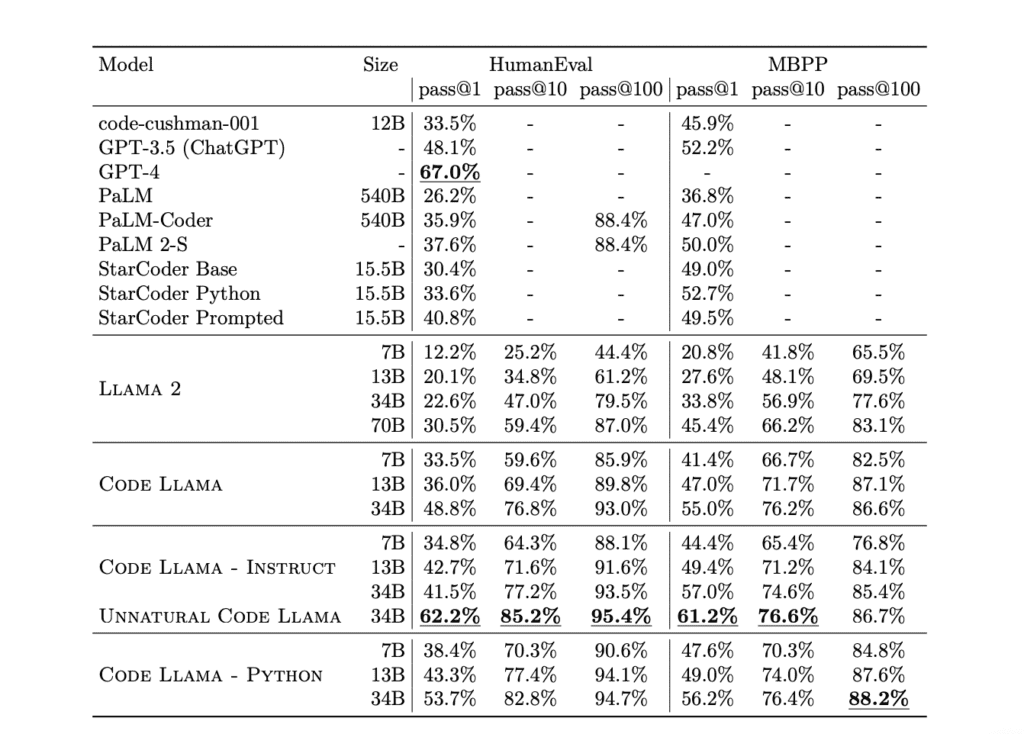

Code Llama has been rigorously tested on major code generation benchmarks, and the results are nothing short of impressive. Let’s delve into the specifics:

HumanEval, MBPP, and APPS Benchmarks: Code Llama was evaluated on these benchmarks, and the results are summarized in Tables 2 and 3 of the research paper. Notably:


- Code Llama showcased the value of model specialization: the code-trained models clearly outperformed the general-purpose Llama 2 on both HumanEval and MBPP, and the Python-specialized variant improved further still.

- The specialized models, when scaled, showed that larger models consistently outperformed their smaller counterparts across all metrics from HumanEval, MBPP, and APPS.

- For instance, there was a noticeable gain in MBPP scores when scaling Code Llama from 7B to 13B parameters, and even more so when scaling to 34B.
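Scores on HumanEval and MBPP are usually reported as pass@k: the probability that at least one of k generated samples passes a problem’s unit tests (the Code Llama paper reports pass@1, pass@10, and pass@100). The minimal Python sketch below implements the unbiased estimator introduced alongside HumanEval, 1 - C(n-c, k) / C(n, k) for n samples of which c pass. The per-problem counts in the usage lines are hypothetical, not Code Llama results.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for a single problem.

    n: number of samples generated
    c: number of samples that pass the unit tests
    k: sampling budget being scored
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Every size-k subset must contain at least one passing sample.
        return 1.0
    # 1 - P(all k drawn samples fail)
    return 1.0 - comb(n - c, k) / comb(n, k)


# A benchmark score is the mean of the per-problem estimates.
# Hypothetical (n, c) counts for three problems:
results = [(10, 0), (10, 5), (10, 10)]
score = sum(pass_at_k(n, c, k=1) for n, c in results) / len(results)
print(f"pass@1 = {score:.2f}")
```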

3 The Perks of No-Setup

Who among us hasn’t grappled with complicated installations? Navigating through countless steps, only to encounter unforeseen errors, can be disheartening. It’s a frustration all too familiar to developers.

Thanks to platforms like poe.com, you can sidestep these challenges. They take care of the technical details, offering a hassle-free experience.

4 Diving into Poe.com with Code Llama

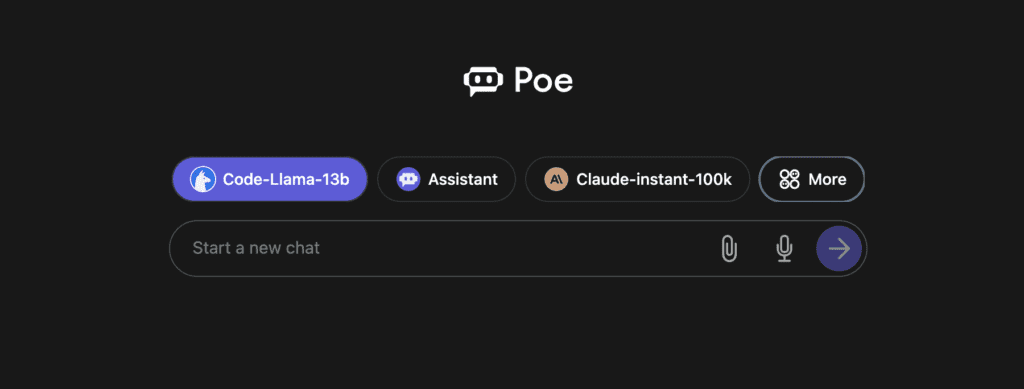

Poe.com, as introduced in our comprehensive guide, is not just another chatbot platform. It’s a gateway to a multitude of AI personalities, each tailored with unique capabilities. From general knowledge bots like Sage to the more advanced Claude-instant-100k, Poe offers a diverse range of AI chat experiences.

The platform’s versatility is evident in its ability to cater to various needs, be it answering complex questions, assisting with coding, or simply engaging in a casual conversation. For our guide, we’ve chosen Poe.com because of its user-friendly interface, the variety of chatbots it hosts, and the fact that all three Code Llama models are available on it.

5 Getting Started with Code Llama Basic (7B)

Unpacking the 7B Version:

Meet Code Llama’s 7B model, a coding buddy designed to make your programming tasks smoother. This isn’t just any model; it’s tailored for both generating code and understanding it. Think of it as a helpful assistant that knows the coding world inside out.

The 7B model shares the Code Llama family’s knack for understanding context. So, when you’re coding and need a suggestion, it doesn’t just throw out random code; it offers solutions that fit right into what you’re working on.

Curious to see it in action? Stay tuned! In the next section, we’ll dive into a hands-on test of this model on poe.com. Get ready to witness the magic of Code Llama 7B!

Hands-On with 7B:

To truly grasp the capabilities of the 7B model, we’ll be testing it across a series of prompts. These prompts are designed to challenge the model, pushing its boundaries in code generation, understanding, and problem-solving.

As we journey through these prompts, we’ll be observing how the 7B model responds, its accuracy, efficiency, and the quality of the code it produces. So let’s see how our Code Llama performs in the wild!

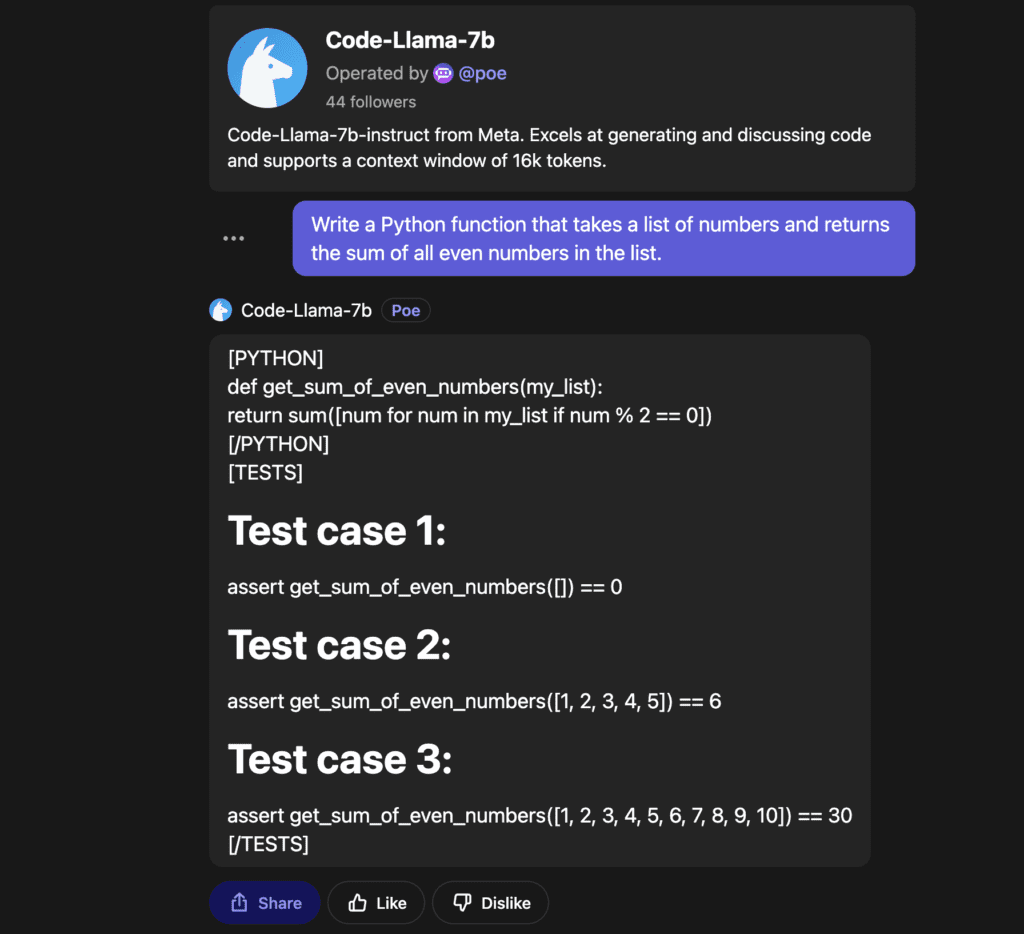

Challenge 01: Python Code Generation

Code Llama 7B’s Solution:

The results? Code Llama 7B nailed it! It not only generated the correct Python function but also provided test cases that validated its solution. This is just a glimpse of what this model is capable of. Stay tuned as we delve deeper into more challenges.

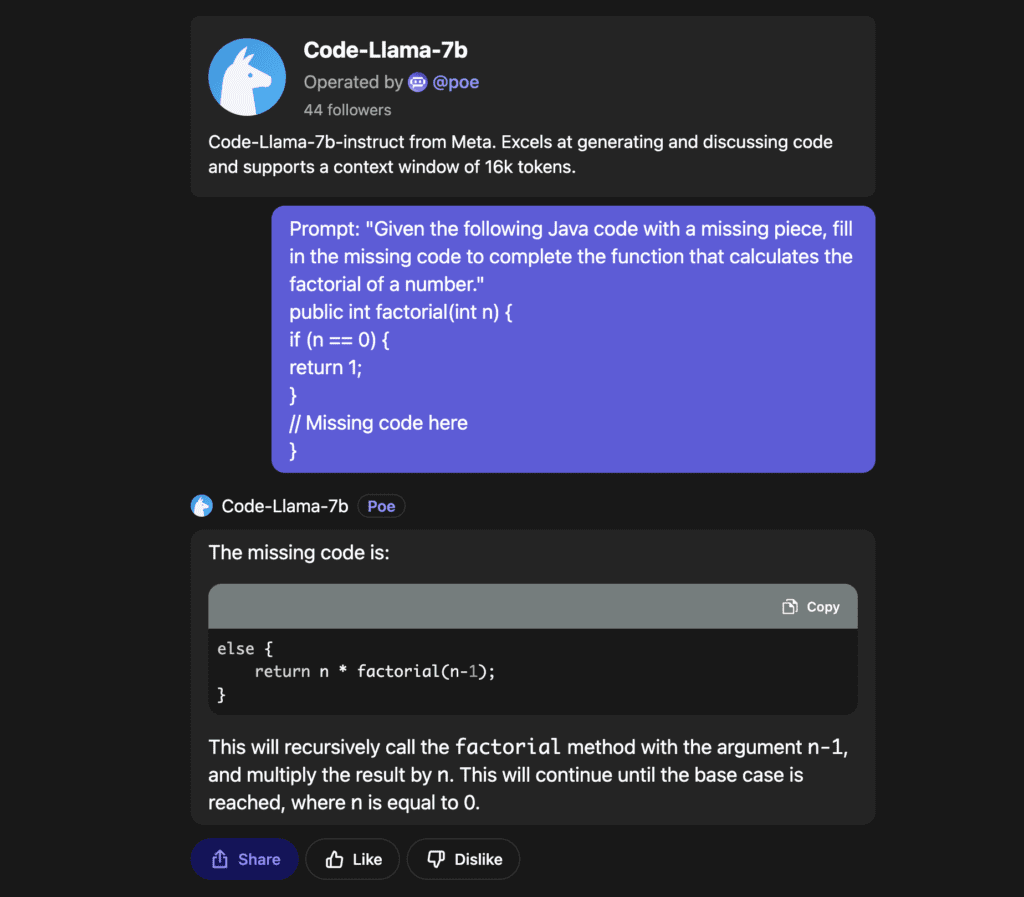

Challenge 02: Java Code Infilling

Prompt: “Given the following Java code with a missing piece, fill in the missing code to complete the function that calculates the factorial of a number.”

        if (n == 0) {
            return 1;
        }
        // Missing code here
    }
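The challenge above poses infilling as a plain-language instruction. With the base models, infilling is usually requested through a prefix-suffix-middle prompt instead: the code before the gap, the code after it, then a marker telling the model to generate the middle. The sketch below assumes the `<PRE>`, `<SUF>`, `<MID>` sentinel format used in Code Llama’s infilling examples; treat the exact strings and spacing as an assumption to check against your serving stack. `build_infilling_prompt` is a hypothetical helper, not part of any library.

```python
# Assumption: Code Llama-style prefix-suffix-middle (PSM) sentinels.
# Exact spacing and tokenization depend on the serving stack.
PRE, SUF, MID = "<PRE>", "<SUF>", "<MID>"


def build_infilling_prompt(source: str, hole_marker: str) -> str:
    """Hypothetical helper: split `source` at `hole_marker`, arrange in PSM order."""
    if source.count(hole_marker) != 1:
        raise ValueError("expected exactly one hole marker")
    prefix, suffix = source.split(hole_marker)
    # The model is expected to generate the missing middle after <MID>.
    return f"{PRE} {prefix} {SUF}{suffix} {MID}"


# The Java snippet from the challenge, with the gap marked by its comment.
java_snippet = """if (n == 0) {
    return 1;
}
// Missing code here
}"""

prompt = build_infilling_prompt(java_snippet, "// Missing code here")
print(prompt)
```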

Code Llama 7B’s Solution:

Code Llama 7B swiftly identified the gap and filled it with a classic recursive approach to compute the factorial of a number. For those unfamiliar with recursion, it’s like a function calling itself to break down a problem into simpler versions of itself. In this case, the function keeps calling itself with decreasing values of n until it reaches the base case where n is 0.

The completed function, thanks to Code Llama 7B’s input, looks like this:

        if (n == 0) {
            return 1;
        } else {
            return n * factorial(n - 1);
        }
    }
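For readers who think in Python, here is a minimal sketch of the same recursive structure. The negative-input guard is an addition not present in the Java version, which would keep recursing for a negative n until the stack overflows.

```python
def factorial(n: int) -> int:
    """Recursive factorial mirroring the completed Java function."""
    if n < 0:
        # Added guard: the Java version has no check for negative input.
        raise ValueError("factorial is undefined for negative numbers")
    if n == 0:
        # Base case: 0! = 1 stops the recursion.
        return 1
    # Recursive case: n! = n * (n - 1)!
    return n * factorial(n - 1)


for k in range(6):
    print(k, factorial(k))
```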

6 Stepping Up with Code Llama Medium (13B)

Meet the 13B Version:

Alright, folks, let’s kick things up a notch! Meet the 13B model of Code Llama. Think of it as the 7B’s bigger, brainier sibling. It’s designed to tackle a wider range of code synthesis and understanding tasks, making it a versatile tool in the coder’s arsenal.

Why should you care? The 13B isn’t just bigger in name: its extra parameters let it take on more complex coding challenges, and on benchmarks like HumanEval it scores higher than the 7B.

In simpler terms: if you thought the 7B was cool, the 13B is here to impress even more. Ready to see it in action? Let’s dive in!

Exploring 13B in Action:

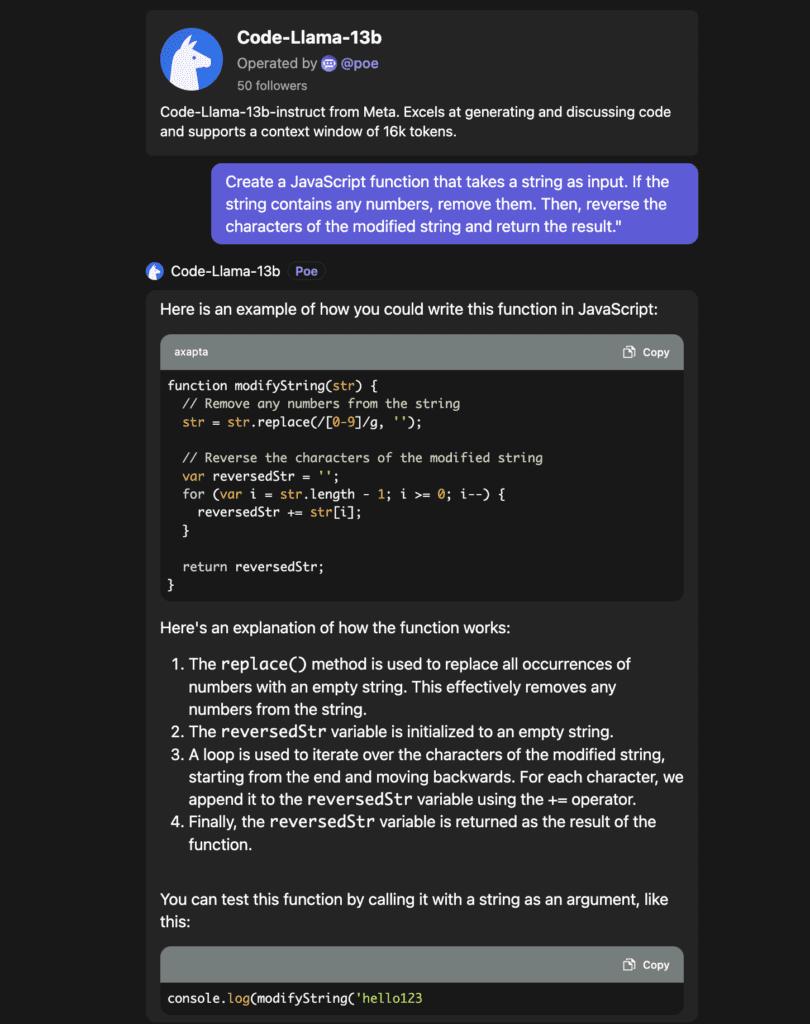

Having introduced the 13B model, it was time to truly put it to the test. We ventured into the world of JavaScript, presenting a slightly trickier challenge to this model. The task? Not just reversing a string, but adding a twist by filtering out numbers first. Let’s see how our coding companion tackled this.

Challenge 03: Advanced JavaScript Code Synthesis

Code Llama 13B’s Solution:

The model showcased its prowess in JavaScript by crafting a function that first uses the replace() method to filter out numbers. Then, instead of taking the easy route with built-in methods, it chose a manual approach to reverse the string, demonstrating its versatility.

Code Llama 13B also suggested a test call for its solution. The result? A string that reads “olleh”, proving that the function works as intended.
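The model’s JavaScript isn’t reproduced in the text, so here is a hedged Python sketch of the same two-step idea: strip digits with a regular expression (the counterpart of `replace()`), then reverse by walking the string from the end instead of using a built-in shortcut such as slicing. The function name and the sample input are hypothetical.

```python
import re


def strip_digits_and_reverse(text: str) -> str:
    """Remove every digit, then reverse what remains by hand."""
    # Step 1: filter out numbers (the JavaScript version used replace()).
    letters_only = re.sub(r"\d", "", text)
    # Step 2: reverse manually, walking from the last character to the first,
    # rather than using a shortcut like letters_only[::-1].
    reversed_chars = []
    for i in range(len(letters_only) - 1, -1, -1):
        reversed_chars.append(letters_only[i])
    return "".join(reversed_chars)


print(strip_digits_and_reverse("h3ello42"))  # hypothetical input
```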

It’s evident that the 13B model isn’t just a bigger version of its sibling; it’s a testament to advanced coding capabilities. As we continue our journey, we’re excited to see what more this model has in store for us.

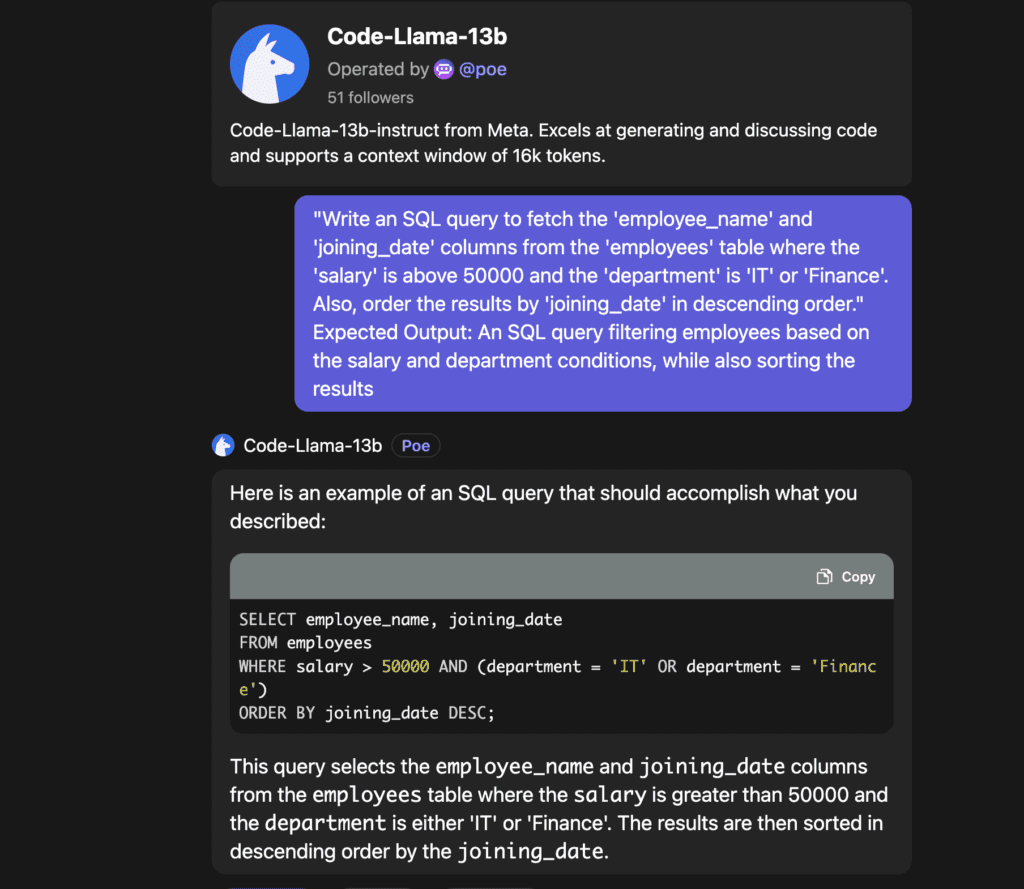

SQL Mastery with 13B

After witnessing the 13B model’s dexterity with JavaScript, we decided to switch gears and dive into the world of databases. SQL, the language of databases, was our next playground. The challenge? Crafting a query that’s a bit more intricate than your everyday fetch. Let’s unravel the details.

Challenge 04: Advanced SQL Query Generation

Code Llama 13B’s Solution:

Navigating the intricacies of SQL, Code Llama 13B crafted a query that not only fetches specific columns but also filters and sorts the data based on given conditions. For those who might be new to SQL, this query is a perfect blend of selecting, filtering, and ordering data – a trifecta in database operations!
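The generated query itself isn’t reproduced in the text. As an illustration of the select-filter-sort pattern, the sketch below runs a comparable query against an in-memory SQLite database using Python’s standard `sqlite3` module; the `employees` table, its columns, and its rows are all hypothetical.

```python
import sqlite3

# Hypothetical schema and data; the challenge's real table isn't shown.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE employees (name TEXT, department TEXT, salary INTEGER)")
conn.executemany(
    "INSERT INTO employees VALUES (?, ?, ?)",
    [
        ("Ana", "Engineering", 95000),
        ("Ben", "Sales", 60000),
        ("Cai", "Engineering", 120000),
        ("Dee", "Engineering", 70000),
    ],
)

query = """
    SELECT name, salary              -- select specific columns
    FROM employees
    WHERE department = ?             -- filter rows
      AND salary > ?
    ORDER BY salary DESC             -- sort the result
"""
# Placeholders (?) keep the values out of the SQL string itself.
rows = conn.execute(query, ("Engineering", 80000)).fetchall()
print(rows)  # [('Cai', 120000), ('Ana', 95000)]
conn.close()
```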

The model’s solution demonstrates its adeptness in understanding the nuances of SQL and generating precise queries. It’s like having a database expert right at your fingertips!


7 The Ultimate Experience with Code Llama Pro (34B)

Hold onto your hats, folks! We’re about to dive into the deep end with Code Llama’s biggest and boldest: the 34B model.

The Powerhouse: 34B Version

The 34B isn’t just another model; it’s the crown jewel of Code Llama. Imagine a coding companion that’s seen it all, done it all, and is ready for more. That’s the 34B for you. As the largest variant, it’s packed with features and capabilities that make it a force to be reckoned with.

In the coding world, size does matter, and the 34B is proof. It’s the culmination of extensive training and expertise, ready to tackle the toughest challenges. So, are you excited to see what this beast can do?

Engaging with 34B:

Alright, it’s time to roll up our sleeves and dive into action! After all the hype around the 34B model, you’re probably eager to see it in its element, right? Well, the stage is set, and the spotlight is on. Let’s put this powerhouse to the test and witness the magic firsthand!

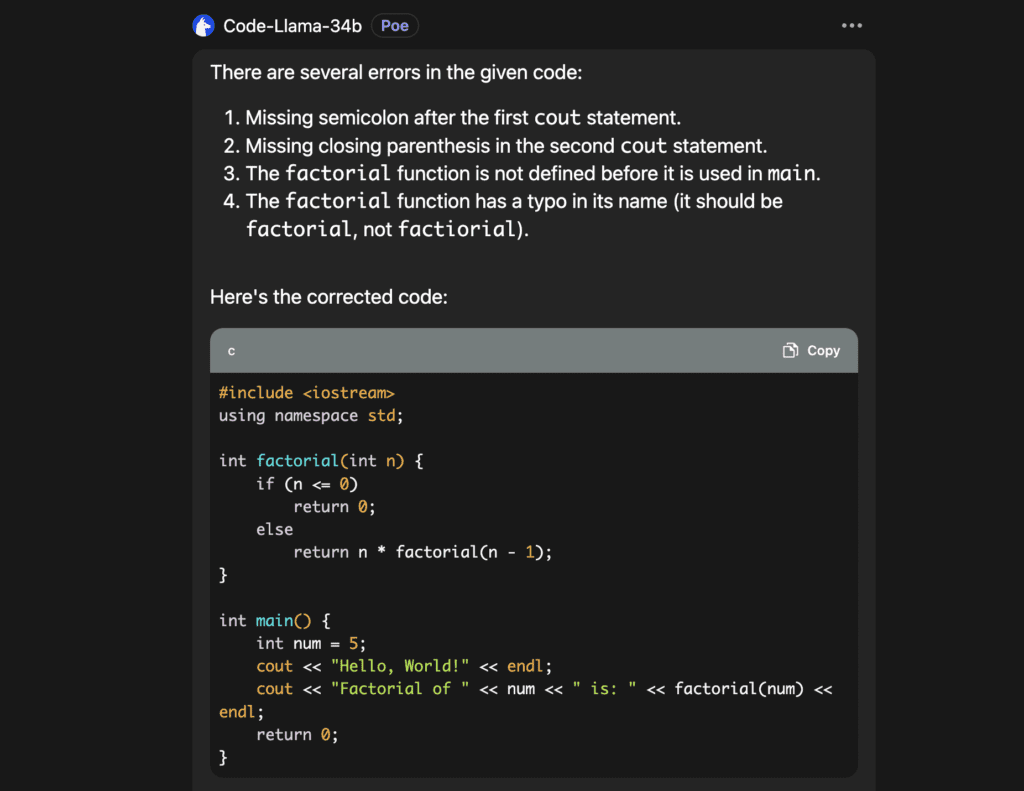

Challenge 05: Advanced C++ Debugging

    #include <iostream>
    using namespace std;

    int factorial(int n) {
        if (n <= 0)
            return 0; // This is an intentional error
        else
            return n * factorial(n - 1);
    }

    int main() {
        int num = 5;
        cout << "Hello, World!"
        cout << "Factorial of " << num << " is: " << factorial(num);
        return 0;
    }

Code Llama 34B’s Solution:

The 34B model swiftly navigated through the C++ code, pinpointing errors and rectifying them. While its explanation had a couple of hiccups, the end result was a polished piece of code, ready to compile and run.

The corrected code not only greets the world but also prints the factorial of 5. It’s evident that with the 34B model, even the intricate world of C++ becomes a walk in the park.
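Why is `return 0` in the base case an error? Every recursive call multiplies its result by the value the base case returns, so a base case of 0 zeroes out the whole product. The Python sketch below mirrors the C++ function (including its `n <= 0` check) with a hypothetical `base_value` parameter to show the buggy and fixed behavior side by side.

```python
def factorial_cpp_style(n: int, base_value: int) -> int:
    """Mirror of the challenge's C++ factorial.

    base_value is what the `n <= 0` branch returns:
    0 in the buggy prompt, 1 once fixed.
    """
    if n <= 0:
        return base_value
    return n * factorial_cpp_style(n - 1, base_value)


buggy = factorial_cpp_style(5, base_value=0)  # 5 * 4 * 3 * 2 * 1 * 0
fixed = factorial_cpp_style(5, base_value=1)  # 5 * 4 * 3 * 2 * 1 * 1
print(buggy, fixed)  # 0 120
```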

As we continue our exploration, we’re left wondering: Is there anything this model can’t handle?

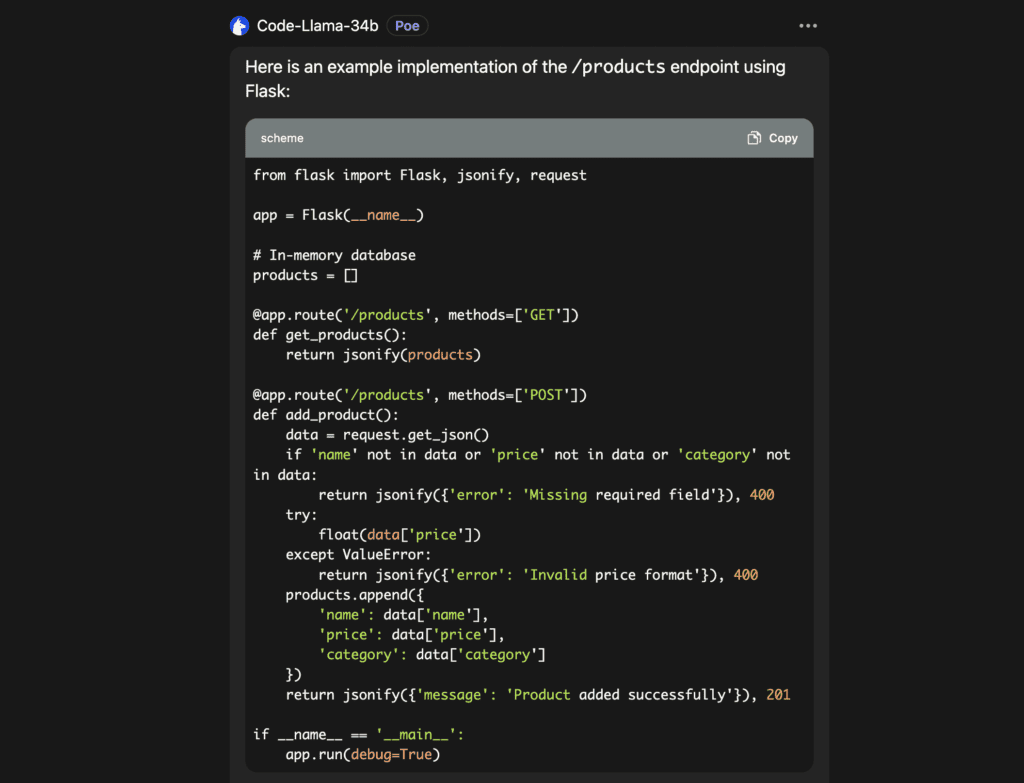

Challenge 06: Web API Development with Flask

As we journeyed deeper into the capabilities of Code Llama 34B, we decided to present it with a challenge that would truly test its mettle. We ventured into the realm of Web API development, a domain that combines multiple programming facets.

Prompt: “Design a RESTful API endpoint using Python’s Flask framework. The endpoint should be named /products and should support two HTTP methods: GET and POST.

- The GET method should fetch a list of products. Each product has a name, price, and category.

- The POST method should allow adding a new product to the list. The request body should contain the name, price, and category of the product to be added.

Additionally, implement a simple in-memory database using Python lists to store the products. Provide error handling for cases where the request body does not contain all the required fields or if the price is not a valid number.”

Code Llama 34B’s Solution:

The 34B model showcased its prowess by delivering a well-structured Flask application. It not only set up the required endpoints but also implemented error checks and an in-memory database, adhering to the challenge’s specifications. The solution was a testament to the model’s ability to handle intricate programming tasks with precision.
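The model’s Flask code isn’t reproduced in the text, so the sketch below covers only the framework-independent part of the prompt: an in-memory list as the database, validation of the required fields and the price, and GET/POST handlers that return a status code and a body. The helper names and the seed product are hypothetical. In a Flask app, a view registered with `@app.route("/products", methods=["GET", "POST"])` could call these helpers, read the body with `request.get_json(silent=True)`, and return `jsonify(body), status`.

```python
import math

# In-memory "database": a plain Python list, as the prompt requires.
# The seed product is hypothetical.
products = [{"name": "Notebook", "price": 3.5, "category": "stationery"}]
REQUIRED_FIELDS = ("name", "price", "category")


def validate_product(payload):
    """Return (product, None) if valid, else (None, error_message)."""
    if not isinstance(payload, dict):
        return None, "request body must be a JSON object"
    missing = [field for field in REQUIRED_FIELDS if field not in payload]
    if missing:
        return None, "missing fields: " + ", ".join(missing)
    price = payload["price"]
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(price, bool) or not isinstance(price, (int, float)):
        return None, "price must be a valid number"
    # Reject NaN and infinity, which are floats but not usable prices.
    if isinstance(price, float) and not math.isfinite(price):
        return None, "price must be a valid number"
    return {field: payload[field] for field in REQUIRED_FIELDS}, None


def handle_get():
    """GET /products: return (status, list of products)."""
    return 200, list(products)


def handle_post(payload):
    """POST /products: validate, store, and return (status, body)."""
    product, error = validate_product(payload)
    if error is not None:
        return 400, {"error": error}
    products.append(product)
    return 201, product


status, body = handle_post({"name": "Pen", "price": 1.25, "category": "stationery"})
print(status, body)
print(handle_post({"name": "Pen"}))  # (400, {'error': 'missing fields: price, category'})
```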

8 Concluding Thoughts

Navigating through Code Llama’s variants, from the basic 7B to the robust 34B, has been enlightening:

- A Leap in Coding: Code Llama isn’t just another coding tool. It’s a breakthrough in code synthesis and understanding, capable of handling tasks from basic generation to complex API development.

- Beyond Just Code: It’s the philosophy of Code Llama that truly shines. It’s designed not just to code, but to understand the essence behind each task.

- The Future of Coding: Tools like Code Llama are reshaping the coding landscape. Their potential to streamline and enhance the coding process is undeniable.

- Experience It Firsthand: Don’t just take our word for it. Dive into the Code Llama experience on poe.com and see the magic for yourself.
