Ever wondered if there’s an AI that can keep up with the big names in tech? Here comes Mistral AI, a new player with big ambitions. They’ve cooked up something called Mistral Large, and it’s turning heads.

Will it shake up the world of AI as we know it? Let’s zoom in and see what the buzz is all about.

1 What is Mistral AI?

Mistral AI is a cutting-edge artificial intelligence firm based in France, launched in April 2023 by an impressive team of former Meta Platforms and Google DeepMind experts. In a short span of time, Mistral AI has established itself as a key player in the AI field, securing a remarkable 385 million euros in funding by October 2023 and achieving a valuation exceeding $2 billion by December of the same year. The company specializes in developing advanced language models, such as the notable Mistral Large, aiming to democratize AI technology with its innovative, high-performing, and accessible AI solutions.

2 Comprehensive List of Mistral AI Models :

Open Models: Empowering the AI Community

Mistral 7B: Picture this as the entry point into Mistral AI’s world – a versatile 7B transformer model that’s all about customization and rapid deployment. Whether you’re tackling text generation, language translation, or any other use case, Mistral 7B is designed to be your go-to, offering a solid foundation with the flexibility to adapt to your specific needs.

Mixtral 8x7B: Now, if you’re craving more power and efficiency, Mixtral 8x7B steps up the game. It’s a sparse Mixture-of-Experts (SMoE) model built from 7B-scale experts that uses about 12B active parameters per token out of roughly 45B in total. Released under the Apache 2.0 license, it reflects Mistral AI’s commitment to open-source innovation, combining strong performance with broad accessibility.
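To make the "active parameters" idea concrete, here is a minimal sketch of sparse top-2 expert routing, the general mechanism behind Mixture-of-Experts layers. It is a toy illustration, not Mixtral's actual code: the experts are simple scaling functions, the router scores are made up, and the names `top_k_route` and `moe_layer` are hypothetical.

```python
import math


def softmax(values):
    """Numerically stable softmax over a short list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits, k=2):
    """Pick the k highest-scoring experts and normalize their weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_layer(x, experts, router_logits, k=2):
    """Run only the selected experts and blend their outputs."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))


# Eight toy "experts"; each one just scales its input by a different factor.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
# Made-up router scores for a single token.
router_logits = [0.1, 2.0, -1.0, 0.3, 2.0, 0.0, -0.5, 0.2]

routes = top_k_route(router_logits)
output = moe_layer(3.0, experts, router_logits)
```

Only two of the eight experts run for this token, which is why a model of this kind can hold far more parameters in total than it uses on any single forward pass.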

Optimized Models: Designed for Performance

Mistral Embed: For those delving into the nuances of text representation, Mistral Embed offers state-of-the-art semantic extraction capabilities. Scoring 55.26 on the Massive Text Embedding Benchmark (MTEB), it’s engineered to turn text into embeddings that let applications search, compare, and process language with high accuracy.

Mistral Small: When time is of the essence, and you need snappy responses, Mistral Small is your ally. Optimized for cost-efficiency and low-latency workloads, this model ensures that your applications run smoothly and swiftly, without breaking the bank. It’s the optimal choice for developers looking to balance speed and expenditure.

Mistral Large: Mistral AI’s flagship, Mistral Large, excels at reasoning in complex, multilingual settings. It is fluent in five languages and has a 32K-token context window, which supports detailed, context-rich text generation. It can be combined with retrieval-augmented generation (RAG) to draw on external knowledge, and it supports function calling for tailored app development. Strong in coding, math, and reasoning tasks, Mistral Large is the model Mistral AI positions for its most demanding natural language processing work.

3 Key Features of Mistral Large :

What sets Mistral Large apart? Let’s break it down:

- Language Fluency: It’s not just about understanding text; it’s about grasping the subtleties of language. Mistral Large is natively fluent in English, French, Spanish, German, and Italian, making it a polyglot in the world of AI. This fluency extends to its nuanced understanding of grammar and cultural contexts, allowing it to perform tasks with a level of sophistication that mirrors human understanding.

- Context Window Size: With a 32K tokens context window, Mistral Large can recall and utilize vast amounts of information from large documents. This capability is essential for tasks requiring deep knowledge and context, from text analysis to generating detailed reports.

- Function Calling and JSON Output: At the intersection of AI and software development, Mistral Large shines with its native ability for function calling and outputting data in JSON format. This makes it not just an AI model but a tool for developers to build complex applications, enabling seamless integration into tech stacks and modernizing application development.

- Precision in Instruction-Following and Moderation Policies: Mistral Large doesn’t just follow instructions; it follows them with precision. This precision is crucial for developers looking to design specific moderation policies or ensure that the AI’s outputs align closely with user intent.
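As a rough illustration of the function-calling feature listed above, the sketch below builds a chat request that declares one tool and then dispatches a tool call locally. Treat the details as assumptions: the field names (`tools`, `tool_choice`, `function.arguments`) follow the chat-completions request format as we understand it, `mistral-large-latest` is assumed to be the model identifier, `get_weather` is a hypothetical tool, and the model's reply is simulated rather than fetched over the network. Check the official API reference before relying on any of it.

```python
import json


def get_weather(city: str) -> dict:
    """Hypothetical local tool; returns canned data instead of a real lookup."""
    return {"city": city, "forecast": "sunny", "temp_c": 21}


# Registry mapping tool names the model may request to local functions.
TOOLS = {"get_weather": get_weather}


def build_request(user_message: str) -> dict:
    """Assemble a request body that advertises one callable tool."""
    return {
        "model": "mistral-large-latest",  # assumed model identifier
        "messages": [{"role": "user", "content": user_message}],
        "tools": [{
            "type": "function",
            "function": {
                "name": "get_weather",
                "description": "Look up the weather forecast for a city.",
                "parameters": {
                    "type": "object",
                    "properties": {"city": {"type": "string"}},
                    "required": ["city"],
                },
            },
        }],
        "tool_choice": "auto",
    }


def run_tool_call(tool_call: dict) -> str:
    """Decode the JSON arguments, run the matching tool, return JSON text."""
    name = tool_call["function"]["name"]
    args = json.loads(tool_call["function"]["arguments"])
    return json.dumps(TOOLS[name](**args))


request_body = build_request("What's the weather in Paris?")

# Simulated tool call, shaped like what a model might send back.
simulated_call = {
    "function": {"name": "get_weather", "arguments": '{"city": "Paris"}'}
}
tool_result = run_tool_call(simulated_call)
```

In a real application, `request_body` would be sent to the API and `tool_result` would go back to the model in a follow-up message so it can write the final answer.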

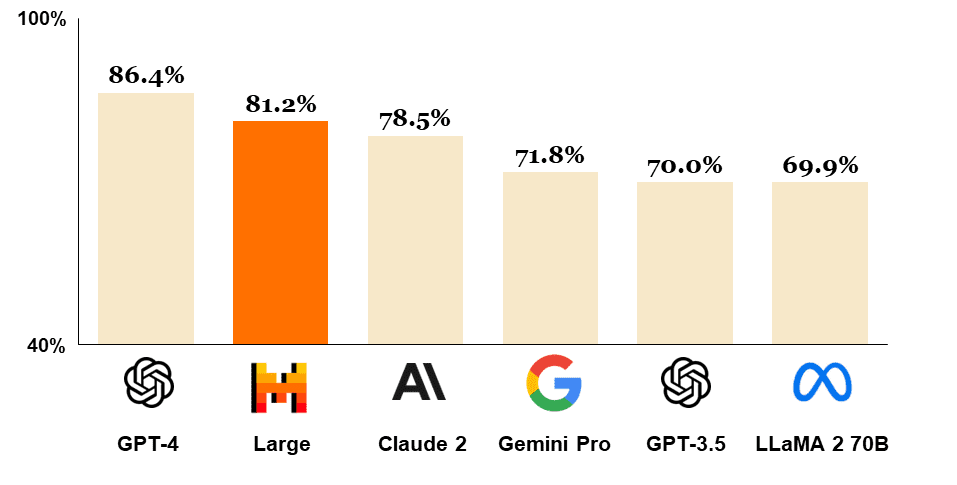

4 Mistral Large Performance and Benchmarks :

5 Mistral Large Pricing and Access :

- For Mistral Large, you’re looking at $8.00 per million input tokens and $24.00 per million output tokens.

- In comparison, open-mistral-7b is a far cheaper $0.25 per million tokens for both input and output.

This flexible pricing ensures that whether you’re running low-latency applications with Mistral Small or leveraging the high-complexity reasoning of Mistral Large, you’re getting a competitive rate.
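To see what these rates mean for a real workload, here is a small cost estimator. It uses only the prices quoted above and assumes both input and output are billed per million tokens; the token volumes in the example are invented, and the function name `estimate_cost` is ours.

```python
# Prices in USD per million tokens, as quoted in this article.
PRICES_PER_MILLION = {
    "mistral-large": {"input": 8.00, "output": 24.00},
    "open-mistral-7b": {"input": 0.25, "output": 0.25},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated bill in USD for a given token volume."""
    price = PRICES_PER_MILLION[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical monthly volume: 2M input tokens and 500K output tokens.
large_cost = estimate_cost("mistral-large", 2_000_000, 500_000)
small_cost = estimate_cost("open-mistral-7b", 2_000_000, 500_000)
```

For that volume, Mistral Large comes to $28.00 and open-mistral-7b to about $0.63, so the choice of model matters far more to the bill than small changes in prompt length.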

Accessing Mistral Large is streamlined through La Plateforme, Mistral’s secure European-hosted developer hub, which offers an array of models and resources to build sophisticated applications.

Alternatively, Azure users can integrate Mistral Large with the familiar tools and services of Azure AI Studio and Azure Machine Learning for a seamless experience.

For those requiring utmost privacy and control, self-deployment options are available, allowing you to run Mistral models within your own infrastructure.

With Mistral Large, affordability meets accessibility, bringing frontier AI within reach of innovators everywhere. Whether you’re iterating quickly with Le Chat or deploying at scale, Mistral AI provides the flexibility and power to elevate your AI projects.

6 Testing Mistral Large :

We’ll run a series of tests on Mistral Large using Le Chat. Each scenario is designed to measure a specific ability: coding, logic, or problem solving.

Coding Challenge: The Classic Snake Game

Prompt: “Create a Python program that simulates the classic Snake game, including the game loop, snake movement, food spawning, and collision detection.”

Response :

Results :

Mistral Large successfully completed the Snake Game Coding Challenge, providing a functional script that implements the classic game using the curses library for terminal-based graphics and user input.
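For readers who want to see what the core of such a program involves, here is our own minimal sketch of the game logic: movement, food spawning, and collision detection. It is not Mistral Large's output. It leaves out the curses rendering and keyboard handling so the rules can be run and checked on their own, and names such as `step` and `spawn_food` are ours.

```python
import random
from collections import deque

# Directions as (row, column) offsets.
UP, DOWN, LEFT, RIGHT = (-1, 0), (1, 0), (0, -1), (0, 1)


def spawn_food(snake, rows, cols, rng=random):
    """Pick a random free cell for the next piece of food."""
    free = [(r, c) for r in range(rows) for c in range(cols)
            if (r, c) not in snake]
    return rng.choice(free) if free else None


def step(snake, direction, food, rows, cols, rng=random):
    """Advance the game by one tick and return (snake, food, alive)."""
    head_r, head_c = snake[0]
    new_head = (head_r + direction[0], head_c + direction[1])
    hit_wall = not (0 <= new_head[0] < rows and 0 <= new_head[1] < cols)
    ate = new_head == food
    # The tail cell is vacated this tick unless the snake grows.
    body = list(snake) if ate else list(snake)[:-1]
    if hit_wall or new_head in body:
        return snake, food, False
    new_snake = deque([new_head] + body)
    if ate:
        food = spawn_food(new_snake, rows, cols, rng)
    return new_snake, food, True


# One tick on a 5x5 board: the snake moves right onto the food and grows.
snake = deque([(2, 2), (2, 1), (2, 0)])
snake, food, alive = step(snake, RIGHT, (2, 3), rows=5, cols=5)

# Moving up from the top row runs into the wall and ends the game.
_, _, alive_after_wall = step(deque([(0, 0)]), UP, (3, 3), rows=5, cols=5)
```

A full game would call `step` inside a loop, read the next direction from the keyboard, and redraw the board after each tick.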

Logical Reasoning: The Fox, Goose, and Bag of Beans Puzzle

Prompt: “You are at a river with a fox, a goose, and a bag of beans. You have a boat that can only carry one item besides yourself. If left alone, the fox will eat the goose, and the goose will eat the beans. Devise a plan to get all three across the river without any harm.”

Response :

In the logic test featuring the classic “fox, goose, and bag of beans” puzzle, Mistral Large provided a correct sequence of crossings that got all three items to the other side of the river safely. This shows that it can work through constraint-based logic puzzles of this kind.
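If you want to check an answer to this puzzle yourself, a short breadth-first search finds a shortest valid plan. The sketch below is our own verification aid, not part of Mistral Large's response; the state encoding and the function names `is_safe` and `solve` are our choices.

```python
from collections import deque

ITEMS = ("fox", "goose", "beans")
# Pairs that must never be left together without the farmer.
UNSAFE = [{"fox", "goose"}, {"goose", "beans"}]


def is_safe(bank):
    """A bank is safe if it holds no forbidden pair."""
    return not any(pair <= bank for pair in UNSAFE)


def solve():
    """Return the cargo carried on each crossing of a shortest plan."""
    # State: (items on the starting bank, is the farmer on the starting bank?)
    start = (frozenset(ITEMS), True)
    goal = (frozenset(), False)
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        (left, farmer_left), path = queue.popleft()
        if (left, farmer_left) == goal:
            return path
        here = left if farmer_left else frozenset(ITEMS) - left
        for cargo in [None, *sorted(here)]:
            new_left = set(left)
            if cargo is not None:
                if farmer_left:
                    new_left.discard(cargo)
                else:
                    new_left.add(cargo)
            new_left = frozenset(new_left)
            # The bank the farmer just left is the one without supervision.
            unattended = new_left if farmer_left else frozenset(ITEMS) - new_left
            if not is_safe(unattended):
                continue
            state = (new_left, not farmer_left)
            if state not in seen:
                seen.add(state)
                queue.append((state, path + [cargo or "nothing"]))
    return None


plan = solve()
```

The resulting `plan` has seven crossings. It starts by taking the goose over and ends by fetching the goose again, with one return trip carrying the goose back in the middle.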

Problem Solving:

In our next test, we will be using the prompt that was previously utilized in the evaluation of Gemini Advanced:

“Today I own three cars, but last year I sold two cars. How many cars do I own today?”

Mistral Large answered correctly: three cars. It recognized that the question asks about the present and that last year’s sale does not change today’s count. We reuse this prompt across models to check consistency, so it serves as a simple point of comparison between Mistral Large and models such as Gemini Advanced.

7 Use Cases and Applications :

Mistral Large, as showcased by its impressive performance on various benchmarks, is poised to revolutionize several industries through its advanced language understanding and generation capabilities. With its state-of-the-art semantic extraction, multilingual support, and coding proficiency, Mistral Large can significantly impact sectors like software development, education, and research.

Software Development: Developers can harness Mistral Large to write and debug code more efficiently, leveraging its top-tier reasoning to navigate complex problem-solving scenarios. Its ability to generate code and perform human-like evaluations can streamline the development process, fostering innovation and accelerating the creation of robust applications.

Education: In educational settings, Mistral Large can serve as an advanced teaching assistant, providing personalized learning experiences and comprehensive content summaries. Its multilingual abilities make it an invaluable tool for language learning and cultural studies, while its reasoning skills can help explain complex concepts in science, technology, engineering, and mathematics (STEM).

Research: For researchers, Mistral Large’s ability to process and recall information from extensive documents can aid in literature reviews and data analysis. Its precise instruction-following and JSON output format are ideal for managing large datasets and extracting insights, propelling research projects forward with greater accuracy and efficiency.

By integrating Mistral Large, industries can leverage AI to not just automate tasks but also to create innovative solutions that were previously unattainable.

Whether it’s through enhancing customer service bots, developing intelligent educational tools, or supporting cutting-edge research, Mistral Large is equipped to drive growth and transformation across various sectors.

8 Conclusion :

Mistral Large has shown impressive capabilities, demonstrating strong reasoning and multilingual proficiency in our tests. While slightly trailing behind GPT-4, it presents a more cost-effective solution without significant compromise on performance.

Its accessibility through La Plateforme and Azure, along with self-deployment options, offers flexibility for various applications. Mistral Large stands as a valuable asset for innovation in AI, promising to enhance a range of industries with advanced AI integration.